Programmable sketchbooks

Success of spreadsheets

Making end-user programming powerful and ubiquitous is a central goal within our lab’s broader vision of making better tools for thought. Spreadsheets are a widely successful end-user programming tool, and in particular we are inspired by their support of gradual enrichment and programming in the moment.

Spreadsheets support gradual enrichment by allowing you to quickly put text and numbers in arbitrary boxes as if using a pen and graph paper, do basic math as if using a calculator, move things into neat rows and columns when you find yourself making comparisons, and add more formula-driven cells and labels if/when necessary as your model evolves—incrementally building dynamic complexity over time. You might eventually polish the formatting, clearly label input cells, and turn the model into a reusable piece of situated software. Each bit of effort to refine the model is a small investment made in a moment when the payoff is immediate.

When software allows adding dynamic behavior as part of gradual enrichment, it supports programming in the moment. Spreadsheets support dynamic behavior with live, reactive, declarative formulas you can place anywhere you would otherwise have data. They treat data and formulas equally: where they appear in the interface, how they are edited, and how they reference each other. This interchangeability supports programming in the moment by allowing you to keep working with the same tools, in the same mindset—thinking only about the specific thing you are modeling—even though you have switched to programming. They don’t force you to stop and switch to your programmer hat.

Through this lens, spreadsheets stand out as one of the more successful and prevalent instances of end-user programming. They give a broad range of users access to computational power while maintaining flexibility through the use of the familiar and adaptable grid—what Bonnie Nardi calls a visual formalism.

Spreadsheets are immensely popular for uses that fit neatly within a grid of cells (and have been creatively misused enough to prove their flexibility), but they are not the end of the road for end-user-programmable media.

Despite the spreadsheet’s computational advantages, most people don’t reach for a spreadsheet the moment they want to think through a problem or communicate an idea.

Enduring value of pen and paper

Despite an overabundance of other tools, many people still turn first to pen and paper for ideating, note taking, sketching, and more.

There is something special that happens in the brain when making marks by hand, and a large body of research suggests it has various advantages, whether for learning and psychological development, retention of material, or activating more pathways in the brain.

What the hand does, the mind remembers.

We believe these attributes contribute to pen and paper’s staying power:

- Flexible & free-form

While word processors constrain text to predefined lines, on paper you can write anywhere. You aren't limited to writing text either: you can draw diagrams, sketch images, and doodle in the margins. You can combine these styles of expression freely, without stopping to change tools or select formatting or ask a system for permission.

- Immediate

You can pick up a pad of paper and begin writing without hesitation, and without working through any system of menus or dialog boxes. This is essential for fluidly expressing and thinking through ideas, working at the speed of thought. This is also essential when integrated into a larger activity, like two people having a conversation over a pad of paper or a student taking notes alongside practicing an instrument.

- Intimate and tangible

You can touch and feel a pen and paper, hold them, and move them around; there is nothing mediating your interaction. You don't have to take care to avoid touching the wrong place and altering or losing your work, as is often the case with a digital tablet. You receive full tactile feedback even down to feeling the friction of your writing implement dragging across the paper's surface. This results in a strong sense of direct manipulation and working with the material.

- Informal & "sketchy"

The natural grain of digital media is to create precise and polished artifacts, but the fidelity of the tool you use should match the maturity of the idea you're working with. Nascent ideas are tiny, weak, and fragile. They require attention, nimbleness, and open-ended thought to survive. When a tool pushes you into inappropriate levels of precision, it can slow you down and suppress creativity: you want to quickly throw a box on the screen but by the time you've chosen the drop-shadow depth and rounded-corner radius, that whiff of an idea has dissipated. Handwriting allows looseness, quickness, and incremental refinement, attributes hard to come by in the world of computers.

- Connected with human life

Pen and paper are deeply entangled into our lives and shared cultures. Most people have been making marks on paper since infancy. Even if we don't consider ourselves to be talented artists, we all have distinct and personally meaningful ways of expressing ourselves with ink. Simply put, it feels good to mark on paper.

Digital pen and paper, in the form of tablet/stylus interfaces, have extended the power of their traditional counterparts with sophisticated editing interactions like copy-paste, undo, and infinite zoom, while trying to keep some of paper’s unique charms.

Shortcomings of digital paper

We should be thoughtful about basic advantages of paper that we can lose when moving to a digital tablet/stylus. For example, you can:

- spread papers out over a table, or across a wall

- thumb through a stack of papers, re-sort them, put them in different folders

- pass papers to a colleague and not worry whether the contents have synced across the network yet

- cut a piece of paper, rearrange it, and glue it to another

- use different tools (straight edge, compass, highlighter) mixed and matched all on the same paper without the designers of those tools needing to know about each other

- operate pens with remarkably low latency

- work with sheets of paper without irrelevant pop-up notifications, running out of batteries, or system crashes

- purchase sheets of paper extremely cheaply

We want a digital pen and paper that maintain as many of the above attributes as possible, but with all the benefits of a dynamic digital medium. The most important of these benefits is programmability: describing new behaviors for a sketchbook to take on, whether automating, processing, or simulating.

Research questions

What does it mean for a sketcher to program as they sketch? How might this new power aid them in their life and work? While we can’t foresee all the possible uses of a tool that doesn’t exist today, we can take inspiration from uses of traditional pen and paper to imagine a few use cases that hint at a larger set of possibilities:

- An event planner draws up a list to pair things that need to be done with people available to do them. After a bit of connecting lines and boxes, they add some behavior to highlight the remaining unmatched people/items to make them easier to see. Later on, they need to do this again for a different event, so they turn this first version into a template for easy re-use.

- A furniture designer sketches patterns for a table leg. After they decide they love a pattern that repeats a vine figure in a spiral down the leg, they automate the hand-drawn spiral pattern so they can try out different vine shapes instantly as they sketch. This helps them explore the design space quickly and stumble onto entirely new designs.

- An engineer on a circuit-design team is trying to illustrate to a colleague how their signal processing might be flawed. They roughly sketch what an incoming signal might look like, and then program in a model of what the circuit will do. The engineers take turns sketching on the page, trying out different signals and models, coming to a shared understanding of the problem.

These three examples share an interesting feature—they all illustrate programming in the moment while sketching. This is our ambitious vision for a programmable sketchbook.

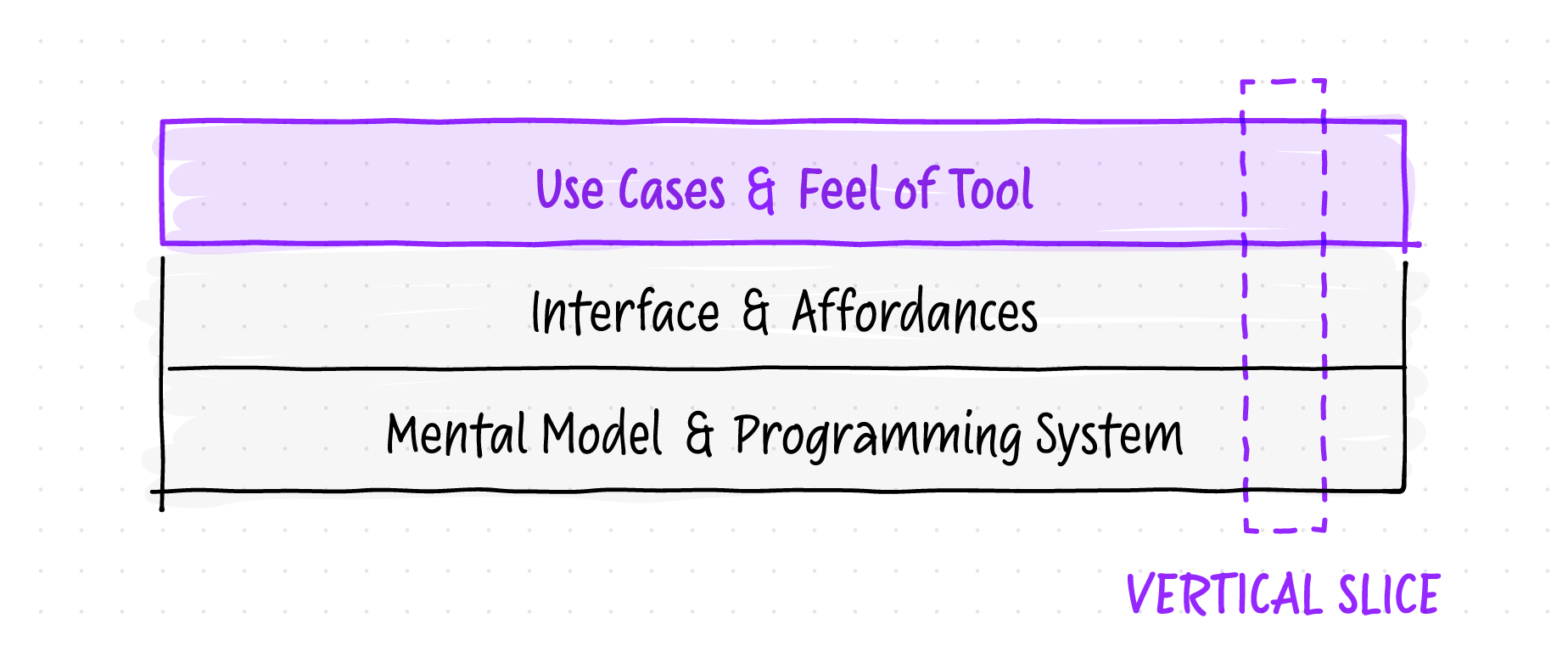

However, this vision raises multiple layers of research questions at once:

- What mental model or programming concepts are the right fit for interacting with hand-drawn marks?

What features of a formula or programming system are important? Is a formal language even exposed?

- How should an interface be designed for programming in the moment while inking?

How do you interact with dynamic drawings? How do you see relationships between ink and the program? What affordances could make pen and tablet programming ergonomic? How do you edit a program "with pen in hand"?

- What might you make with a programmable sketchbook?

What are the most interesting use cases for this tool and how will it feel to interact both with the tool and with your creations? Which uses should the natural grain of this tool encourage?

A common research approach would be to start by designing a programming system from first principles. But because we believe the uses for this tool will be novel—and therefore unforeseeable—we don’t want the design to arbitrarily constrain the potential usage space.

Consequently these questions form a dependency stack in the reverse order: the use cases and aspirational feel of the tool determine the right design for the programming/editing interface, and in turn the way you interact with the models you build determines the right mental model and formula/programming system design.

If we want to start at the beginning of the dependency chain to explore use cases and the feel of a tool like this, we need to build a vertical slice through this whole stack to produce a working prototype we can actually play with.

For the bottom two layers we chose naive/incidental solutions—whatever was pragmatic, expedient, or best guess from our previous research experience in this area—so we could spend most of our time on the top layer.

Project goals

The essence of the Inkbase project was to experience that moment of making a sketch, enhancing it with dynamic functionality, and interacting with the result.

We hoped to unlock the next phase of this line of research by discovering interesting use cases, starting to understand the required ergonomics, and developing better intuition for how the tool should feel.

Prior art

While we haven’t seen work that directly achieves Inkbase’s goals, there has been a plethora of relevant work over the years, spanning from the 1960s to the present. A selection of projects we have taken ideas and inspiration from:

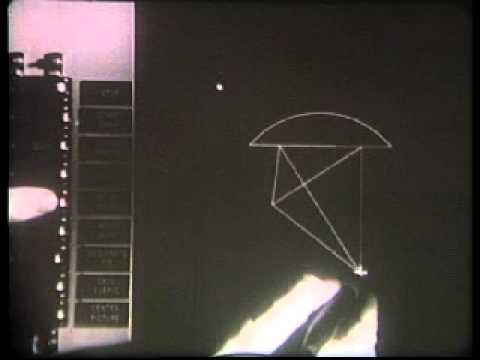

Sketchpad — Ivan Sutherland — 1963

(paper, video)

Sketchpad allowed the user to draw and manipulate dynamic models directly using a "light pen". Even with the rudimentary hardware/software available at the time, it achieved a fluidity and level of direct manipulation in some ways unrivaled today. Creation of dynamic relationships/constraints directly with the pen is aspirational for Inkbase, though constraint solving is not currently implemented (explored in prior lab research).

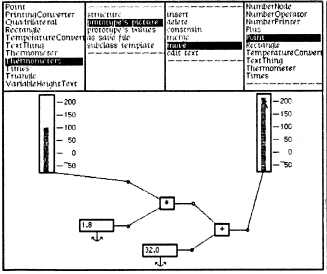

ThingLab — Alan Borning — 1979

(video, tool, code, paper)

ThingLab is a constraint-based visual model builder, extending Ivan Sutherland's ideas in Sketchpad. One of our favorite and most inspiring tools, it allows a model to be made by drawing it on the canvas with components that are easy to compose and also easy to create.

Viewpoint: Toward a Computer for Visual Thinkers — Scott Edward Kim — 1988

(paper, video)

Viewpoint is a demonstration that a simple text and graphic editor can be built by drawing it, and that this editor can be used to build itself. Inkbase takes inspiration from the goal that strictly everything is on the canvas, always visible, and that a wide range of functionality can be built using a small number of primitives.

INK-12 — Koile, Rubin, et al — 2010

(video, info, papers)

INK-12 is investigating how a combination of digital tools and freehand drawing can support teaching and learning mathematics in upper elementary school. Most interesting to us is the use of the computer to provide mental bookkeeping—helpful annotations and checking of the user's math while still requiring the user to solve the problem themselves. More on this in our findings.

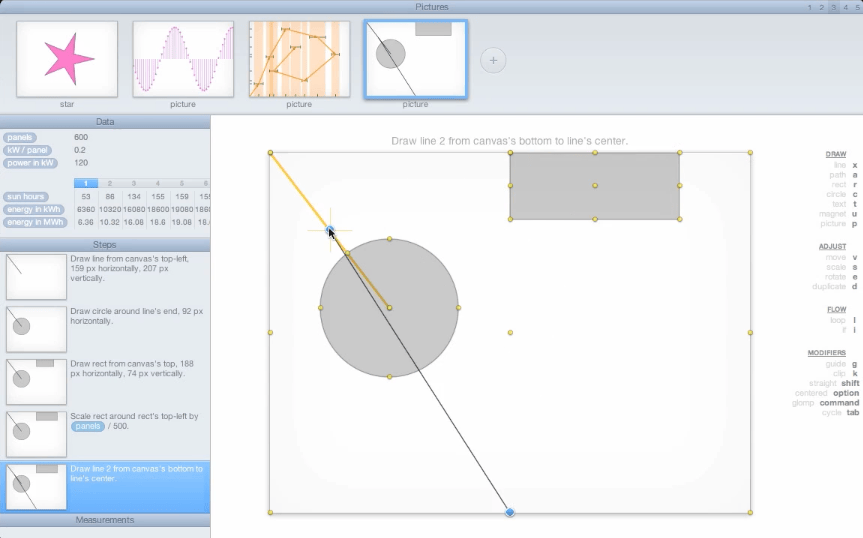

Drawing Dynamic Visualizations — Bret Victor — 2013

(video, notes)

DDV is a prototype system for making dynamic visualizations by drawing them. The user can draw and manipulate objects on the canvas and parameterize these historical actions to create imperative programs. DDV is inspiring in its delivery of true "programming in the moment" while drawing.

Dynamicland — Bret Victor, et al — 2013

(site, notes from Omar Rizwan)

Dynamicland is a deeply inspiring new computational medium where people work together with real objects in the real world. Ordinary physical materials are brought to life by technology in the ceiling. Every scrap of paper has the capabilities of a full computer, while remaining a fully-functional scrap of paper. Dynamicland has influenced our thinking, particularly on programmable live objects and spatial relations.

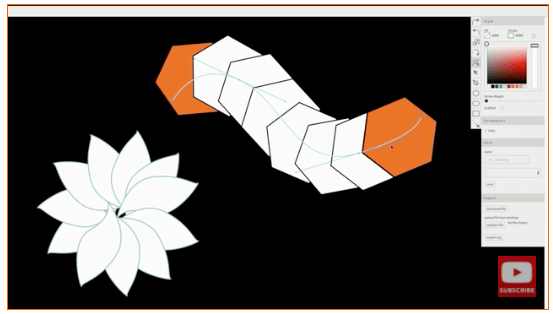

Para — Jennifer Jacobs — 2014

(video, tool, code)

Para is a prototype digital illustration tool for making procedural artwork. Through creating and altering vector paths, artists can define iterative distributions and parametric constraints. Again, we are inspired by the creation and editing of dynamic relationships via direct manipulation.

Related: Dynamic Brushes

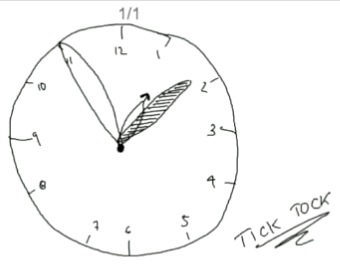

TickTock / Pronto — Dan Ingalls — 2016

(video: TickTock example, video: Pronto talk)

TickTock is just a tiny demo in Dan Ingalls's Pronto talk where he brings a hand-drawn clock to life by manipulating the drawing and then combining the history of his actions with some pre-made widgets—programming by example. We find this an inspiring case of true programmable ink.

Object-Oriented Drawing — Haijun Xia — 2016

(paper, video)

OOD is a drawing tool that gives the properties of canvas objects (e.g. color and stroke) spatial representations, moving them into the same workspace as the ink marks so they can be interacted with directly. We are inspired by the way some things that require code in Inkbase (e.g. linking the color of two objects) can be done via direct manipulation in OOD.

Related: DataInk, DataToon

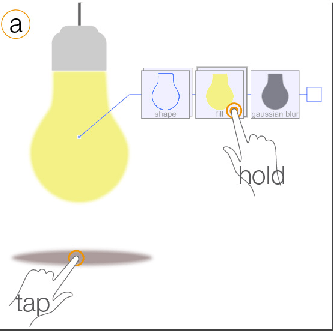

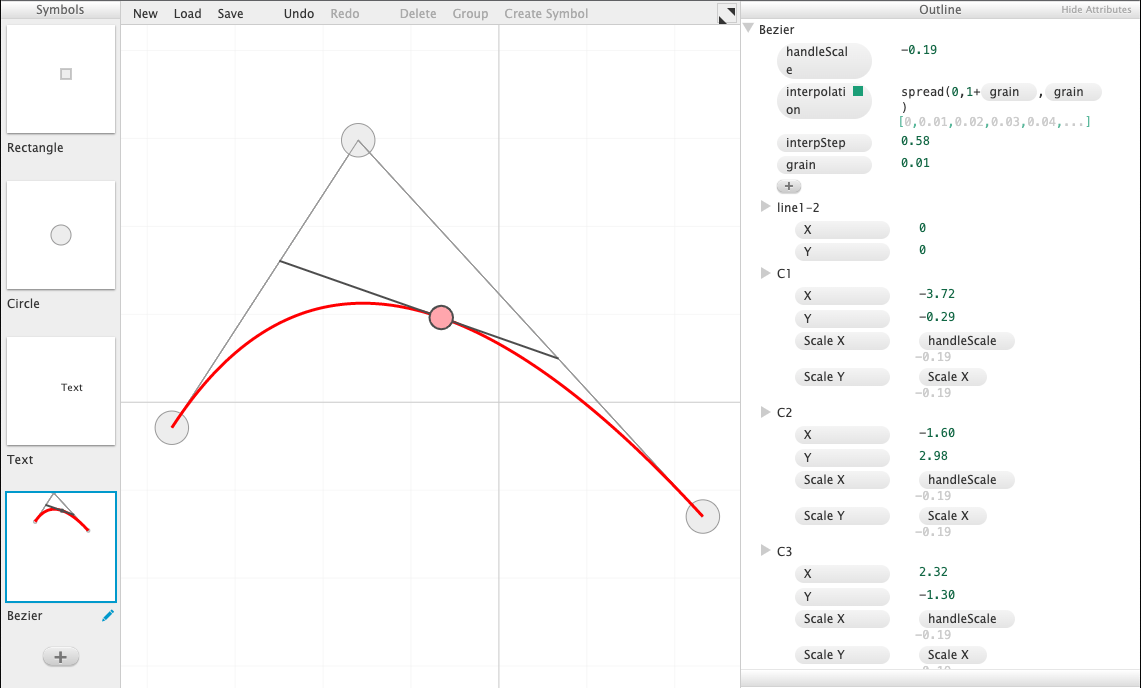

Apparatus — Toby Schachman — 2016

(tool)

Apparatus is a unique and powerful hybrid graphics editor and programming environment for creating interactive visualizations. We love the approach of combining direct manipulation of the canvas with a declarative, reactive formula system.

Related: Cuttle is a more refined product version of these ideas.

Chalktalk — Ken Perlin — 2018

(paper, video, code)

Chalktalk enables a presenter to create and interact with animated digital sketches while presenting. Chalktalk mainly uses freehand drawing as a way to invoke and interact with preprogrammed objects. In comparison, Inkbase pushes toward private notebook use, working directly with ink as the primary material, and more accessible programmability/programming in the moment (see microworlds below for more thoughts on this).

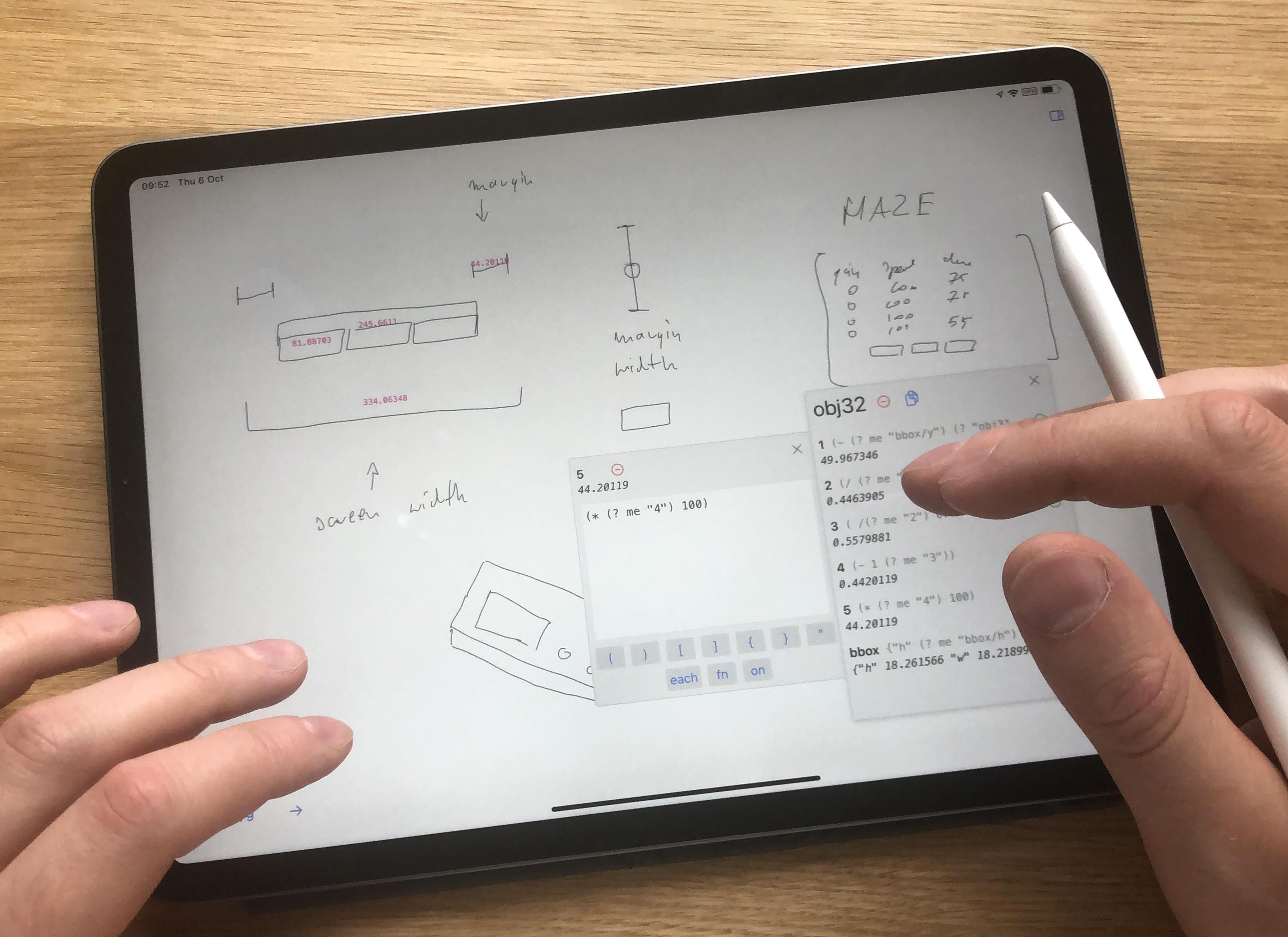

Inkbase

To answer the research questions above, we built Inkbase, a native Swift application for the Apple iPad and Pencil, implementing a programmable sketchbook:

A handwritten task turning green when its box is checked.

Below is a basic primer on Inkbase’s features followed by a sampling of things we made with it.

Inking and selecting

To support gradual enrichment, Inkbase is a sketchbook first—by default the pencil inks on the canvas and touch/drag does nothing:

Inking normally on the canvas using the pencil.

Selection is a quasi-mode—while holding two fingers down on the canvas, touch an object to select or use the pencil to lasso a selection. Ink can be moved around the canvas by dragging it with a finger or pen while holding down an additional finger, or by just dragging objects that are already selected:

Selecting strokes using the pen and moving them with a finger.

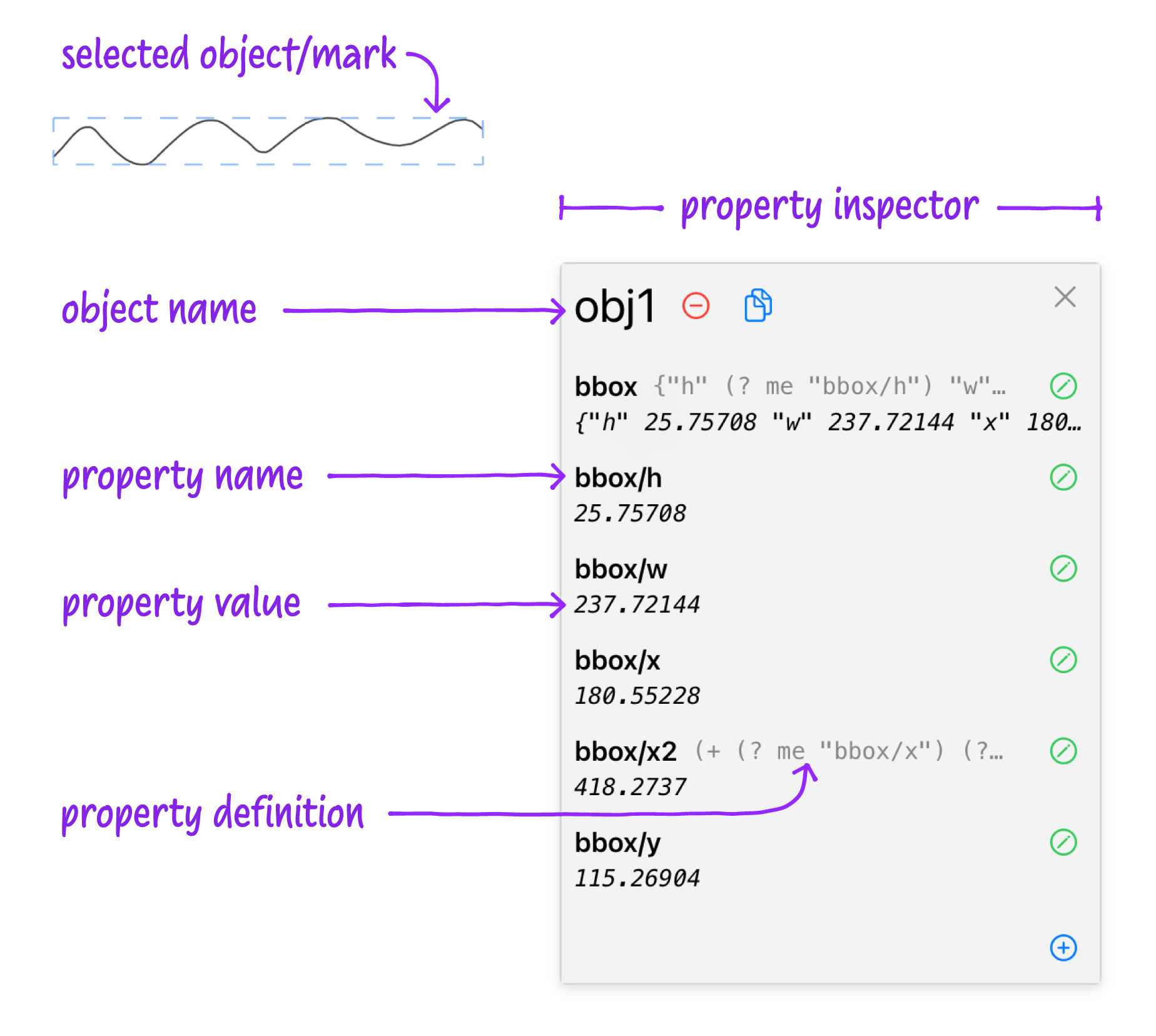

Objects and properties

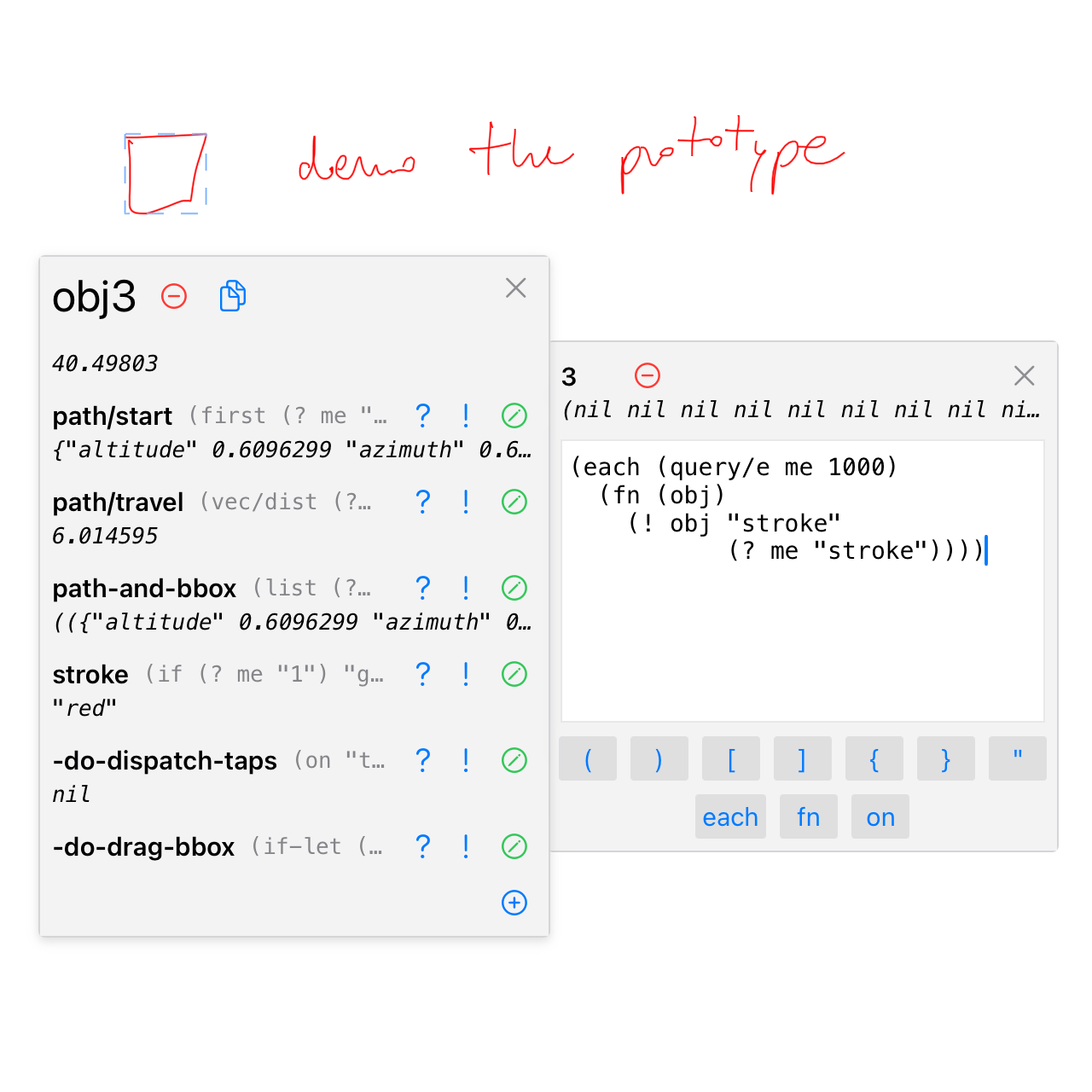

Inked paths are the basic building blocks of everything you can do in Inkbase. We wanted them to be as open-ended and malleable as possible—they are live vector objects with dynamic properties. Selecting an object opens its property inspector:

Inspecting properties of an object.

All properties are live—including the original path as vector data—and can be inspected and edited:

Editing properties of an object. Changing a mark’s width (bbox/w) is reflected immediately on the canvas.

Additional arbitrary properties can be added:

Creating a new property 1 that holds true/false.

Dynamic behavior

Properties can have a value or can be an expression:

Setting the stroke property to be red or green depending on the value of property 1, inserted using the ? button.

We wanted the reactivity of spreadsheets where the dependencies between properties are tracked by the system and values are automatically recalculated when necessary.

Inkbase uses a homegrown flavor of Lisp. In addition to being simple to implement (which allows us to easily interoperate between the core engine functions and end-user code), everything in Lisp is an expression (everything returns a value), which composes nicely with the idea of reactive properties. This is similar to spreadsheet formulas which always return a value into the cell.

Accessing properties is done with the ? function (with a shortcut button available in the Inspector for quick insertion of a property reference).

Property Lookup using (? object property) returns the value of the given property on the given object, and records the dependency in our reactive dataflow system. Now when that value changes, anything depending on it will be updated as well. This is analogous to referencing another cell in a spreadsheet formula using its name, e.g. =A6.
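To make the dependency tracking concrete, here is a minimal Python sketch of the idea, not Inkbase's actual engine (which is written in Swift and exposed through its own Lisp). The names `Cell`, `lookup`, `recompute`, and `set_value` are hypothetical, chosen only for this illustration; `lookup` plays roughly the role of `?`.

```python
class Cell:
    """A property holding either a plain value or a formula (a zero-argument function)."""
    def __init__(self, value=None, formula=None):
        self.value = value
        self.formula = formula
        self.dependents = set()   # cells whose formulas have read this cell

_evaluating = []  # stack of cells whose formulas are currently running

def lookup(cell):
    """Read a value and record that the running formula depends on it."""
    if _evaluating:
        cell.dependents.add(_evaluating[-1])
    return cell.value

def recompute(cell):
    """Re-run a formula cell, then refresh everything that depends on it."""
    if cell.formula is not None:
        _evaluating.append(cell)
        try:
            cell.value = cell.formula()
        finally:
            _evaluating.pop()
    for dep in list(cell.dependents):
        recompute(dep)

def set_value(cell, value):
    """Store a new plain value and propagate the change."""
    cell.value = value
    recompute(cell)

checked = Cell(value=False)
stroke = Cell(formula=lambda: "green" if lookup(checked) else "red")
recompute(stroke)            # first evaluation registers stroke as a dependent of checked
before = stroke.value
set_value(checked, True)     # stroke is recomputed without being touched directly
after = stroke.value
```

The formula never declares its inputs; the dependency is discovered as a side effect of reading, which is the same reason a spreadsheet formula only needs to mention `A6` to stay up to date with it.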

Inkbase objects always exist somewhere on the page. This makes accessing them by their position very natural.

We created a spatial query system where the user can ask for objects in a specific region, inside a specified path, or in a general direction (e.g. “to the right of…”):

Using (query/overlaps me) to find any strokes intersecting the checkbox. The resulting set is initially empty (), then obj4 appears in the set when the check mark is drawn.
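As a rough illustration of what such queries compute, the Python sketch below works on bounding boxes only, which is a simplification: the query in the demo above matches intersecting strokes. The `Mark` type and the functions `query_overlaps` and `query_right` are hypothetical stand-ins, not Inkbase's library.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Mark:
    name: str
    x: float   # left edge of the bounding box
    y: float   # top edge of the bounding box
    w: float
    h: float

def overlaps(a, b):
    """True when the two bounding boxes share any area."""
    return (a.x < b.x + b.w and b.x < a.x + a.w and
            a.y < b.y + b.h and b.y < a.y + a.h)

def query_overlaps(me, page):
    """All other marks on the page whose bounding box overlaps mine."""
    return [m for m in page if m is not me and overlaps(me, m)]

def query_right(me, page, distance):
    """Marks starting within `distance` of my right edge, in my vertical band, nearest first."""
    right = me.x + me.w
    hits = [m for m in page
            if m is not me
            and right <= m.x <= right + distance
            and m.y < me.y + me.h and me.y < m.y + m.h]
    return sorted(hits, key=lambda m: m.x)

checkbox = Mark("checkbox", 0, 0, 20, 20)
page = [checkbox, Mark("label", 30, 2, 80, 16)]
empty_result = query_overlaps(checkbox, page)      # nothing drawn in the box yet
page.append(Mark("obj4", 5, 5, 12, 12))            # the check mark
hit_names = [m.name for m in query_overlaps(checkbox, page)]
right_names = [m.name for m in query_right(checkbox, page, 50)]
```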

The results of these queries are also reactive (refresh based on changes on the page), so they compose with the rest of the property system:

Setting value of property 1 based on results of spatial query. Combined with the previous stroke expression, now the color of the box changes when a mark is made inside.

Thus we have programmable ink:

Playing with a mark whose stroke color is reactive.

Lastly, our reactive system also allows for side-effects—pushing new values into the dependency graph “from outside”, using the ! function.

Property Setting using (! object property value) sets the given property on the given object to the given value. This is equivalent to the user opening a property inspector and typing a value, but done programmatically.

We can use this, for example, to make all of the marks to the right of this box react to its stroke state:

Using the ! expression to set the stroke property of all of the marks to the right (query/e for “East”).

All of the marks to the right now have a reactive stroke also.
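The pattern of one mark pushing a value onto its neighbors can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: marks are plain dictionaries, "to the right" is decided by x position alone, and `set_property`, `east_of`, and `push_stroke_east` are hypothetical names (`set_property` stands in for `!`).

```python
marks = {
    "box":   {"x": 0,   "stroke": "green"},
    "word1": {"x": 40,  "stroke": "black"},
    "word2": {"x": 90,  "stroke": "black"},
    "note":  {"x": -30, "stroke": "black"},   # sits to the left of the box
}

def set_property(name, prop, value):
    """Programmatic equivalent of typing a value into the property inspector."""
    marks[name][prop] = value

def east_of(name):
    """Names of marks whose x position lies to the right of the given mark."""
    return [other for other, m in marks.items()
            if other != name and m["x"] > marks[name]["x"]]

def push_stroke_east(name):
    """Copy this mark's stroke onto every mark to its right."""
    for other in east_of(name):
        set_property(other, "stroke", marks[name]["stroke"])

push_stroke_east("box")
```

In a reactive system this push would be re-run whenever the box's stroke changes; the sketch shows a single pass.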

Sampling of use cases

“What is this for?” is a central question for this kind of work. We built models with Inkbase in the pursuit of our central goals of discovering interesting use cases and gaining intuition for the ergonomics and feel of the tool. Below is a sampling of these, each pointing to a different type of use which feels promising and underserved by existing tools.

Mental bookkeeping

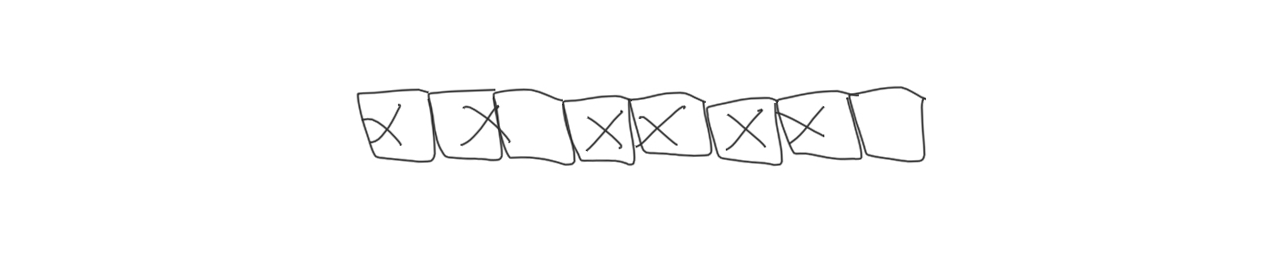

One class of use cases we find interesting is common notebook/sketchbook tasks where the system can provide helpful automation or bookkeeping. A basic example is the habit tracker, a motivational tool for establishing or maintaining habits. Habit trackers revolve around the emotional and visual satisfaction of completing an activity and lengthening the streak, or the discomfort of breaking one.

You could make a habit tracker to, for example, increase your consistency at exercising every day. You write the name of the habit, draw boxes representing each day, and mark each box if you completed a workout that day:

Marking days completed in an exercise habit tracker.

The key feature of a habit tracker is that you can easily visualize the length of the current streak. You could add behavior to your drawing to color the boxes of the current streak red so it’s easier to see—particularly helpful once you’ve been tracking for a while and the page is full:

Boxes that are part of the current “streak” are highlighted red as you draw, for easy visibility.

This can be done the same way we showed in the Inkbase primer above, by setting the box stroke property to red or black depending on whether it is part of a streak, and determining that with a set of spatial queries (see discussion of spatial queries for more implementation details).

Now when you miss a day and the streak resets, it’s clearly visible (and extra painful—more motivating):

A streak being reset because a day was missed is now painfully visible.

You could make a streak even more clear by drawing a bracket underneath:

Drawing a bracket underneath the current streak.

You could make the size and location of the bracket dynamic, so it changes in real-time as you use the habit tracker:

The bracket underneath now responds to changes in the current streak as you draw.

This can be done simply by setting the bracket’s left and right to the same value as the left of the leftmost streak box and right of the rightmost streak box, respectively. When you mark a box, it triggers recomputation of the streak, which triggers recomputation of the bracket’s bounding box, which automatically transforms the bracket.
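The bracket computation amounts to taking the extremes of the streak boxes. A small Python sketch, with boxes represented as hypothetical dictionaries holding a left edge `x` and width `w`:

```python
def bracket_extent(streak_boxes):
    """Return (left, right) spanning every box in the streak, or None if there is no streak."""
    if not streak_boxes:
        return None
    left = min(box["x"] for box in streak_boxes)
    right = max(box["x"] + box["w"] for box in streak_boxes)
    return left, right

boxes = [{"x": 10 + 30 * i, "w": 20} for i in range(5)]   # five day boxes in a row
streak = boxes[2:]                                         # the last three days are marked
extent = bracket_extent(streak)
```

Because the extent is derived from the streak, any change to which boxes are in the streak changes the bracket with no extra bookkeeping.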

Model building

Paper notebooks are a great place to sketch out designs, though they remain static. The only way to adjust them is to draw them again, and there’s no way to simulate behavior. Sometimes you want to build a model and play with it to better understand the constraints or behavior of a system.

Ivan Sutherland’s Sketchpad was the first GUI application and introduced many important ideas into the fields of computer science, HCI, and CAD. It’s notable that the origins of interactive computing come from graphical applications, direct manipulation, pencils and tablets—not keyboards, mice, or CLIs.

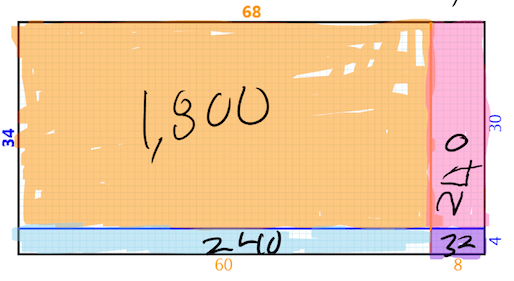

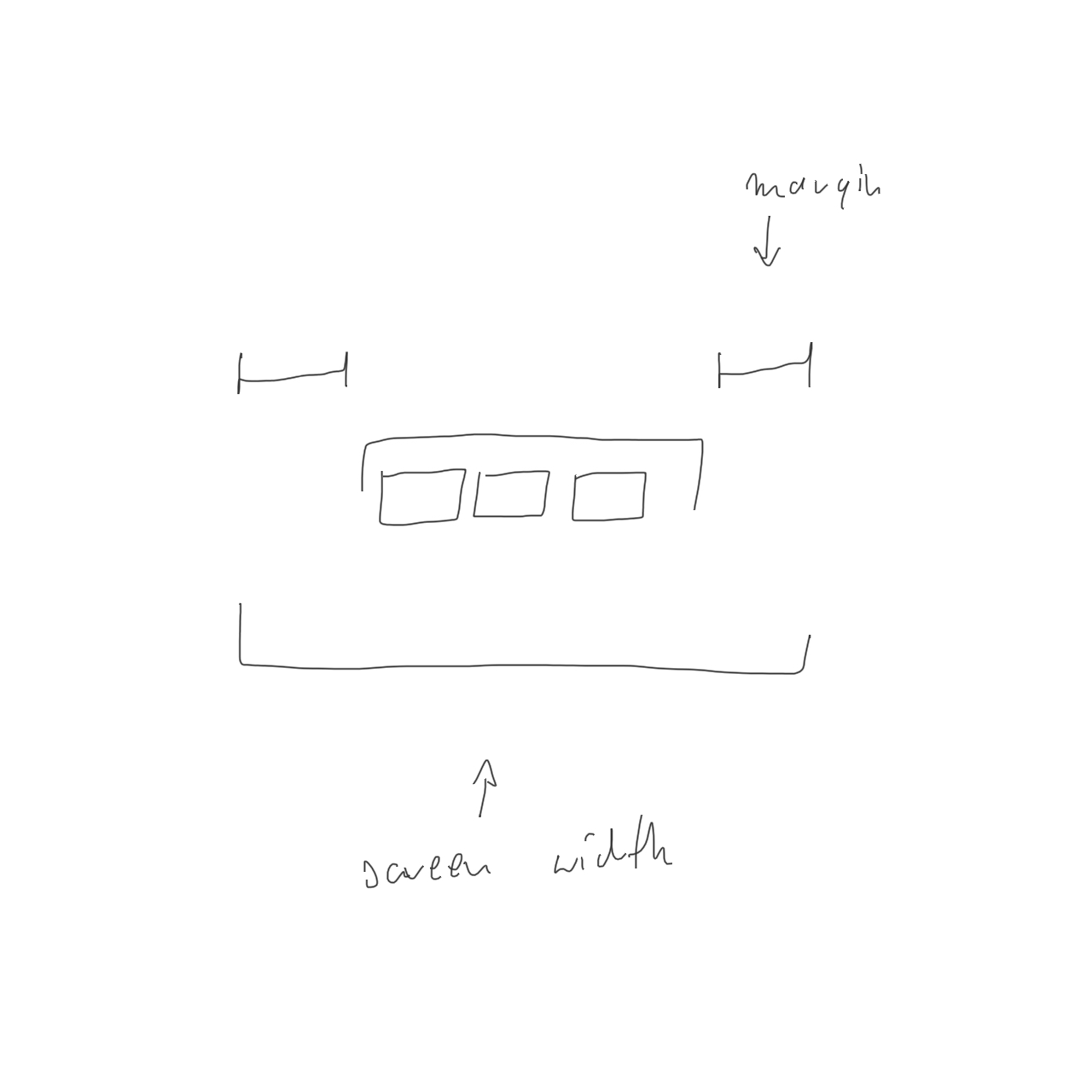

User interface design often starts with a sketch to work out general layout and eventually actual pixel positions of various elements. Perhaps you have a constrained screen size—like this reprogrammable audio effect box with a small OLED screen—and you want to determine the right sizes and spacing for the three UI tab indicators at the bottom:

OLED screen interface with UI tabs represented by the three rectangles at the bottom.

You could start with a quick sketch of the basic layout:

A quick sketch of the basic layout of the interface.

The upper lines represent margins, the middle bracket is the width of the tab section, the bottom bracket represents the total screen width, and the boxes are tabs.

You could add dynamic layout constraints by connecting the margins to the screen width to make them equal and keep the tab area in the middle of the screen, and size the tabs to match the width of the tab area:

A dynamic sketch that maintains layout constraints as values change.

To explore the model more fluidly you could make a slider to control the margin size dynamically:

Exploring the model using a slider to change one of the values.

You could explore different numbers of tabs without redrawing the sketch:

Exploring different numbers of tabs dynamically.

Finally you could add labels for widths so you can take the values and implement them in your UI:

Homing in on final width values.
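The arithmetic behind this model is small enough to write down. The Python sketch below assumes equal left and right margins, evenly sized tabs, and an optional gap between tabs; the 128-pixel screen width, the gap, and the function name `tab_layout` are assumptions made for illustration, not values from the prototype.

```python
def tab_layout(screen_width, margin, tab_count, gap=0):
    """Return (x, width) for each tab, laid out between two equal margins."""
    area_width = screen_width - 2 * margin                 # width of the tab section
    tab_width = (area_width - gap * (tab_count - 1)) / tab_count
    return [(margin + i * (tab_width + gap), tab_width) for i in range(tab_count)]

three_tabs = tab_layout(screen_width=128, margin=10, tab_count=3, gap=3)
four_tabs = tab_layout(screen_width=128, margin=10, tab_count=4, gap=4)
```

Dragging the margin slider or changing the tab count corresponds to calling the function with a different argument; the dynamic sketch does the same recalculation continuously.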

Learning and epistemic actions

Sketching can be used to think through and better understand complex concepts. We find ourselves wishing our sketchbook could be used to draw concepts and add dynamic behavior so we can manipulate the concepts—take epistemic actions—to increase our understanding.

Perhaps you are learning the first principles of computation, studying transistors and logic gates, but don’t understand why a NAND gate is important.

You could sketch basic gates, like AND and OR:

Sketching AND and OR gates.

You could bring them to life with dynamic behavior so they can sense inputs and produce correct outputs (see spatial queries discussion for more detail):

AND gate sensing inputs and producing correct outputs.

You learn that a NAND gate is a combination of AND and NOT, but you could construct both yourself to play with them, compare them, and see the internal state to improve your mental model of how they work:

Playing with NAND and AND-NOT gates and comparing outputs.

You learn that NAND gates are significant because all other gates can be built from them, but you want to see this in action yourself. You could construct, for example, an AND gate from two NANDs and test its behavior:

Constructing an AND gate from two NANDs and testing it.
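The same experiment can be checked in a few lines of Python. This is an illustration of the logic only, separate from how the sketched gates sense their inputs on the canvas:

```python
def nand(a, b):
    return not (a and b)

def not_gate(a):
    return nand(a, a)                     # a NAND with both inputs tied together inverts

def and_from_nands(a, b):
    return nand(nand(a, b), nand(a, b))   # the second NAND acts as the inverter

def or_from_nands(a, b):
    return nand(nand(a, a), nand(b, b))

inputs = [(a, b) for a in (False, True) for b in (False, True)]
and_table = [and_from_nands(a, b) for a, b in inputs]
or_table = [or_from_nands(a, b) for a, b in inputs]
```

Comparing `and_table` with Python's own `a and b` over the four input pairs plays the same role as wiring both gates to the same inputs and watching their outputs.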

This project was inspired by this logic gates Etoys demo, which allows children to play with simulations of circuits using a bin of reusable components that react to what they can “sense” (what’s visible on the canvas):

“Computer Logic Game” built by Alessandro Warth using Etoys.

Sketchy math

Although math is typically viewed as a tool of precision, people who work with quantities often want to work “sketchily”. When exploring a space of possibilities or explaining a big idea, they want to think quickly and loosely and not get bogged down in unnecessary detail.

There’s a reason so many innovations and discoveries have been based on back-of-the-envelope calculations—paper is great for this mode of thought. We hope it’s possible for computers to expand on paper’s capabilities, speeding the tedious or error-prone parts of mathematical sketching and helping sketchers explore further.

Sketching is also used for teaching, as it aids explanation of complex concepts and allows for the creation of visual aids on the fly as you speak. The low fidelity of sketching is key. It allows focus to remain on the big picture concepts rather than the details, as well as creation of bespoke visual aids tightly fitted to the audience and working at the speed of conversation.

But you often want dynamic behavior—you want to draw a sketch and interact with it or have it react. To do math on sketchy objects, you need “sketchy math”.

Physics

Perhaps a friend is learning basic physics. They’ve learned how velocity and acceleration are defined in terms of derivatives, but they want to better understand the concepts. You could sit down with a tablet and talk it through.

You could draw a graph of a bouncing ball:

Sketching a graph of the path of a bouncing ball.

You could annotate this graph as you explain:

“At this point, the ball is moving up, which we know because this line slopes up. And at this point, the ball is moving down, because the line slopes down.”

Annotating the graph while explaining a concept.

“That means the derivative here will be positive, and the derivative here will be negative. Let’s have a look.”

Converting the graph for input into a derivative function and providing a graph to show the output.

Here you grouped the axes to have them recognized as special axes and straightened out, drew a triangle—a derivative symbol—and it was detected as a triangle and colorized to show it has special behavior, and drew and grouped a new set of axes to hold the output of the derivative operation. The computed derivative of your bouncing ball sketch is now displayed below.

See sections on recognition and microworlds below for more detail on how this was implemented.

“As we expected, the derivative is positive here and negative here.”

Annotating the output graph of the derivative operation.

Shown above is a critical feature: you can continue to use the sketchbook as a sketchbook, without interfering in the computations.

“So that derivative is velocity. If we take the derivative again, we get acceleration…”

Adding a second derivative outputting to a third graph.

These operations are all live and reactive, so you can manipulate or re-sketch the paths and see the results as you draw.

“Let’s draw a more typical path for the ball by erasing our old path and drawing a new one. It looks like the velocity is slowly decreasing, and that gives us a more-or-less constant negative acceleration. Gravity!”

Re-sketching the starting graph and seeing live results as you draw.
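One plausible way to compute a derivative from a hand-drawn path is a finite difference over its sample points. The Python sketch below is an assumption about the general technique, not a description of how the prototype's triangle operator is implemented; the sample spacing and the parabola standing in for the drawn path are made up for the example.

```python
def derivative(samples, dt):
    """Forward-difference derivative of evenly spaced samples; the result is one sample shorter."""
    return [(b - a) / dt for a, b in zip(samples, samples[1:])]

dt = 0.5
times = [i * dt for i in range(9)]                # 0.0 through 4.0
height = [20 * t - 5 * t * t for t in times]      # one arc of a bounce: up, then down

velocity = derivative(height, dt)
acceleration = derivative(velocity, dt)
```

Here `velocity` starts positive and ends negative, and every entry of `acceleration` is the same negative number, matching the explanation in the dialogue.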

Statistics

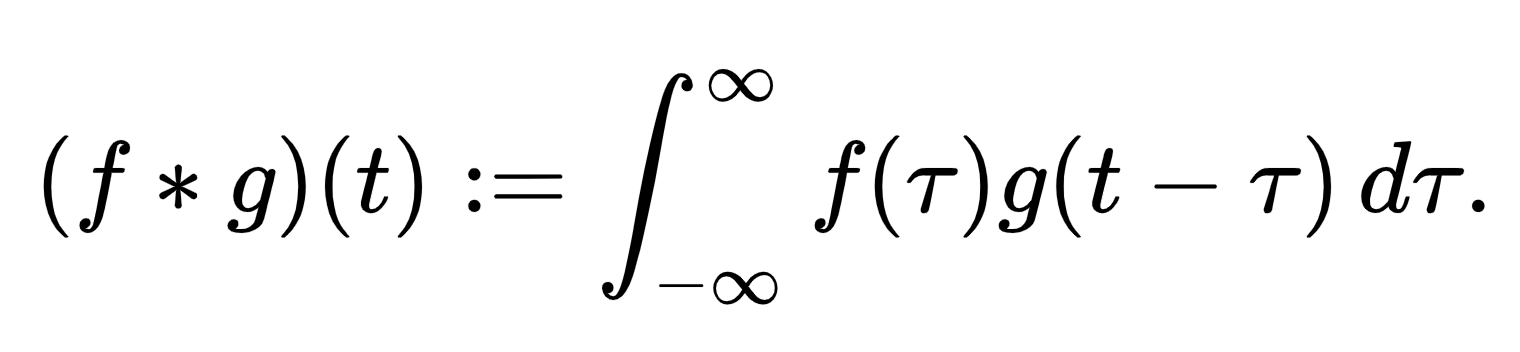

The physics example uses a triangle operator to compute derivatives. The chart prototype was also built with support for a more advanced operation, convolution. Convolution has an intimidating symbolic definition:
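In its standard continuous form, writing $f$ for the input function and $g$ for the kernel (symbol names chosen here for illustration), the convolution is:

$$
(f * g)(x) = \int_{-\infty}^{\infty} f(\tau)\, g(x - \tau)\, d\tau
$$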

But in practice, convolution has an intuitive feeling. Convolution “smears out” one function, using a second function—the kernel—as a template for the smearing. Below, we invoke convolution with a hand-drawn star, then vary the shape of the kernel graph by stretching its width. Watch as the function’s two humps become blended together in the output:

Watching the convolution operation change its output graph as one of the two input graphs is manipulated.

This is an example from statistics. Suppose the distribution of wingspans in a population of birds is bimodal—it has two humps. When we take samples of this distribution, there will be some error inherent in our wingspan measurements, as represented by the bell curve of the kernel. This convolution model demonstrates that if the error level is too high, the two humps will blend into one and the bimodality of the birds will be missed.
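The blending effect can be reproduced numerically. The Python sketch below uses a discrete convolution over sampled curves; the hump positions, widths, and kernel sizes are invented for the example, and `convolve`, `count_peaks`, and `error_kernel` are hypothetical helpers rather than anything from the prototype.

```python
import math

def gaussian(x, mean, sd):
    return math.exp(-0.5 * ((x - mean) / sd) ** 2)

def convolve(signal, kernel):
    """Discrete same-size convolution with an odd-length kernel, zero-padded at the edges."""
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        total = 0.0
        for j, k in enumerate(kernel):
            idx = i - (j - half)
            if 0 <= idx < len(signal):
                total += signal[idx] * k
        out.append(total)
    return out

def count_peaks(ys):
    """Number of strict local maxima."""
    return sum(1 for i in range(1, len(ys) - 1) if ys[i - 1] < ys[i] > ys[i + 1])

# Bimodal population: two humps of wingspans.
wingspans = [gaussian(x, 35, 5) + gaussian(x, 65, 5) for x in range(101)]

def error_kernel(sd):
    """Bell curve of measurement error, sampled from -40 to 40."""
    return [gaussian(k, 0, sd) for k in range(-40, 41)]

low_error = convolve(wingspans, error_kernel(3))
high_error = convolve(wingspans, error_kernel(20))
peaks_low = count_peaks(low_error)
peaks_high = count_peaks(high_error)
```

With the narrow kernel both humps should survive in the output; with the wide kernel they merge into a single hump, which is the missed bimodality described above.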

In both of these examples—physics and statistics—playing with hand-drawn graphs helps the sketcher explore “what if?” scenarios in their domain, while building intuition for the underlying math. The computer contributes to the sketcher’s exploration by providing instant feedback. A system using the traditional computing approach of asking the user for algebraic expressions for the input curves would be tedious to use and distract from the underlying intuition. A computational sketchbook is a natural setting for this sort of “sketchy” exploration.

Key Ingredients

In research we often have under-constrained design spaces to explore. We sometimes use the ingredients analogy at the lab for thinking about the design of a tool—some ingredients are essential to the nature of the thing (apples for apple pie), some are necessary for it to work (leavening agent for bread), some don’t work well together (lemon juice and milk—unless curdling is intended), some don’t taste good together (garlic and chocolate), and some need to be used judiciously (ginger).

Through our design iterations and use case exploration we found certain ingredients were key to the feel we wanted Inkbase to have, and others were required by some or many of our experiments. Below are three that surfaced repeatedly.

Spatial queries

Working with ink on a canvas naturally encourages thinking spatially: location is intentional for every mark you make, as are the spatial relationships between marks. As a result, querying the canvas for other marks ended up being a core part of almost every model we made, making them a likely key ingredient of Inkbase.

For example, in the habit tracker we define whether a box is marked as whether it contains another mark, as determined by query/inside:

contents:

(query/inside me)

[ obj4 ]

marked:

(not empty? (? me :contents))

true

Using (query/inside me) to determine if the box is marked.

Whether a box is part of a “streak” is more complex—only the marked boxes in the most recent (rightmost) unbroken chain are part of a streak. This can be expressed with some (spatially) recursive logic: I am part of a “streak” if I am marked and either the box to my right (using query/r) is part of a streak, or I am the rightmost box:

stroke:

(if (? me :streak) "red" "black")

"red"

streak:

(and

(? me :marked)

(or

(? (? me :to-right) :streak)

(? me :rightmost)))

true

to-right:

(first (query/r me 100))

obj3

Using the marked value with (query/r me 100) and a recursive dependency to determine if a box is part of a streak.
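The recursive streak rule can also be read as a single right-to-left pass over a row of boxes. The sketch below is in Python rather than Inkbase's Lisp, and `streaks` is a hypothetical helper. It assumes the boxes are already ordered left to right and that each box's marked value is known.

```python
def streaks(marked):
    """Return, for each box in a left-to-right row, whether it is part of the streak.

    A box is in the streak if it is marked and either it is the rightmost box
    or the box to its right is in the streak.
    """
    result = [False] * len(marked)
    for i in range(len(marked) - 1, -1, -1):
        is_rightmost = i == len(marked) - 1
        right_in_streak = (not is_rightmost) and result[i + 1]
        result[i] = marked[i] and (is_rightmost or right_in_streak)
    return result

# The first box is marked but separated from the rightmost chain by a gap.
print(streaks([True, False, True, True]))
```

Note that under this rule an unmarked rightmost box means no box is in a streak, which follows directly from the formula above.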

While our spatial query library isn’t exhaustive—we added query functions as needed while using the tool—we did accumulate quite a few functions and helpers. Notably all are implemented in user-space (with the exception of path containment which uses the performant native iOS polygon library).

Our naive queries did run into some challenges with reality, however: natural strokes don’t always align with the logical containers needed for behavior. For example, when marking boxes by hand, you don’t necessarily stay within the lines:

Realistic example of hand-marked boxes.

There isn’t an easy way to determine whether the 2nd box above is checked: (query/inside me) will match nothing because the mark isn’t fully contained inside the box’s polygon, and (query/intersects me) will match the mark both for the 2nd and 3rd boxes because it overlaps both. What we actually want here is fuzzier querying such as finding marks that are mostly or primarily inside a path rather than strictly so (also the “boxes” aren’t always square or even closed paths).
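One way to picture such a fuzzier query is to measure what fraction of a mark's sampled points fall inside a box and compare it to a threshold. This is a Python sketch under stated assumptions, not an existing Inkbase function: `fraction_inside` and `mostly_inside` are hypothetical names, the 0.5 threshold is arbitrary, strokes are assumed to be lists of roughly evenly spaced points, and the box is assumed to be a closed polygon.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test; polygon is a list of (x, y) vertices."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point count.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

def fraction_inside(stroke, polygon):
    """Fraction of a stroke's sample points that lie inside the polygon."""
    if not stroke:
        return 0.0
    hits = sum(1 for p in stroke if point_in_polygon(p, polygon))
    return hits / len(stroke)

def mostly_inside(stroke, polygon, threshold=0.5):
    """True when more than `threshold` of the stroke lies inside the polygon."""
    return fraction_inside(stroke, polygon) > threshold

# A check mark that starts in the 2nd box and spills into the 3rd.
box2 = [(10, 0), (20, 0), (20, 10), (10, 10)]
box3 = [(20, 0), (30, 0), (30, 10), (20, 10)]
mark = [(12, 5), (14, 5), (16, 5), (18, 5), (22, 5)]
print(mostly_inside(mark, box2), mostly_inside(mark, box3))
```

A point-count threshold like this still assumes closed boxes, so it would not address the open or irregular "boxes" mentioned above.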

Or imagine you draw a grid the common way by first drawing a large box and then slicing it with a series of vertical and horizontal lines:

Realistic example of drawing a grid of boxes.

How do we query the contents of the 3rd box in the 2nd row? It isn’t an object, but rather a negative space between other marks. We don’t have a convenient way to do the polygon clipping needed to break the resulting grid cells into separate paths to contain their own logic or for use with spatial queries.

Helper functions could make it much more concise to scope spatial queries to relevant objects or groups, or to slice a set of objects by another set—sort of the spatial equivalent of common array operations, but for polygons/paths. As you might split a string by spaces to get an array of words, you might want to split a large containing mark (the outer box above) by a set of small marks (the vertical/horizontal lines). Further work could make spatial queries more powerful.
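To make the string-splitting analogy concrete, here is a deliberately idealized Python sketch. It assumes the outer mark has been reduced to an axis-aligned bounding box and each slicing stroke to a single x or y coordinate. Real ink would need the polygon clipping described above. `split_box` is a hypothetical helper, not part of Inkbase.

```python
def split_box(box, vertical_xs, horizontal_ys):
    """Split a box into a grid of cells, like splitting a string on separators.

    box is (left, top, right, bottom); the result is a list of rows, each a
    list of (left, top, right, bottom) cells. Lines outside the box are ignored.
    """
    left, top, right, bottom = box
    xs = [left] + sorted(x for x in vertical_xs if left < x < right) + [right]
    ys = [top] + sorted(y for y in horizontal_ys if top < y < bottom) + [bottom]
    return [
        [(xs[c], ys[r], xs[c + 1], ys[r + 1]) for c in range(len(xs) - 1)]
        for r in range(len(ys) - 1)
    ]

# A large box sliced by two vertical and two horizontal lines.
cells = split_box((0, 0, 90, 60), vertical_xs=[60, 30], horizontal_ys=[20, 40])
third_box_second_row = cells[1][2]
print(third_box_second_row)
```

Each returned cell could then serve as the container for a spatial query, which is what the negative space between the drawn lines cannot do on its own.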

Another area of interest is providing more visualizations to aid the user in constructing and understanding spatial queries. In the logic gates example, each gate “senses” its inputs and outputs using simple spatial queries:

input1:

(first (query/tl me 100)) ;; top left

obj11

input2:

(first (query/bl me 100)) ;; bottom left

obj6

output:

(first (query/r me 100)) ;; right

obj13

state:

(and

(? (? me :input1) :state)

(? (? me :input2) :state))

true

write-output:

(! (? me :output) :state (? me :state))

It then visualizes the results of the spatial queries by drawing lines between the gate and the inputs/output. Because the queries are live and reactive, you can see the inputs/outputs “attaching” to the gate when they get within the query distance:

vis-input1:

(draw/line

(? me :center)

(? (? me :input1) :center))

vis-input2:

(draw/line

(? me :center)

(? (? me :input2) :center))

vis-output:

(draw/line

(? me :center)

(? (? me :output) :center))

Visual feedback for spatial relations turned out to be an important part of this experiment: seeing the connections update live helps you understand the simulation.
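The "attaching" behavior follows from how a distance-limited directional query behaves as objects move. The text does not spell out the exact semantics of (query/r me 100), so the Python sketch below is only a guess at them: it assumes the query returns objects whose center lies to the right of the gate's center and within the given distance, nearest first. `query_right` and the dictionary layout are hypothetical.

```python
import math

def query_right(me, others, max_distance):
    """Objects centred to the right of `me` within max_distance, nearest first.

    Assumed semantics, not taken from Inkbase's implementation.
    """
    mx, my = me["center"]
    hits = []
    for obj in others:
        ox, oy = obj["center"]
        distance = math.hypot(ox - mx, oy - my)
        if ox > mx and distance <= max_distance:
            hits.append((distance, obj["id"], obj))
    hits.sort(key=lambda hit: hit[:2])
    return [obj for _, _, obj in hits]

gate = {"id": "gate", "center": (0, 0)}
wire = {"id": "obj13", "center": (150, 10)}
print(query_right(gate, [wire], 100))   # wire is still out of range

wire["center"] = (60, 10)               # drag the wire closer
print(query_right(gate, [wire], 100))   # now it "attaches"
```

In a reactive system the second query result would appear as soon as the wire crosses the distance limit, which is when the visualization line is drawn.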

Inkbase programs are able to add shapes to the canvas dynamically, as seen in this example, using a visual style distinct from user-drawn ink. We took to calling this the “computer voice”. One possible direction is to double down on the computer voice, providing default visualizations for all spatial queries and other program information. Another direction is doing the opposite—allowing only user-drawn marks on the page. We played with both approaches in our experiments but don’t yet have an opinion on this.

Recognition

Coming into this project, we had mixed feelings about the role of ink/stroke/shape recognition. On one hand, interesting dynamic behaviors require that the computer have some understanding of the intent of marks, and recognition of inked marks feels like a natural way to interact with a programmed system. If this intent isn’t provided by recognition, it would have to be provided by a non-ink-based interaction—such as manual tagging or duplicating from a template. If it’s a design goal for the sketcher to spend most of their time drawing with a pen, recognition feels necessary.

On the other hand, there are few experiences in computing more frustrating than trying to be understood by an opaque system that claims to be “smart”. Mistakes in recognition—which will, realistically, be quite common—leave the user feeling powerless and mystified. This is directly opposed to our design principles: users should have total control over and visibility into their working environment.

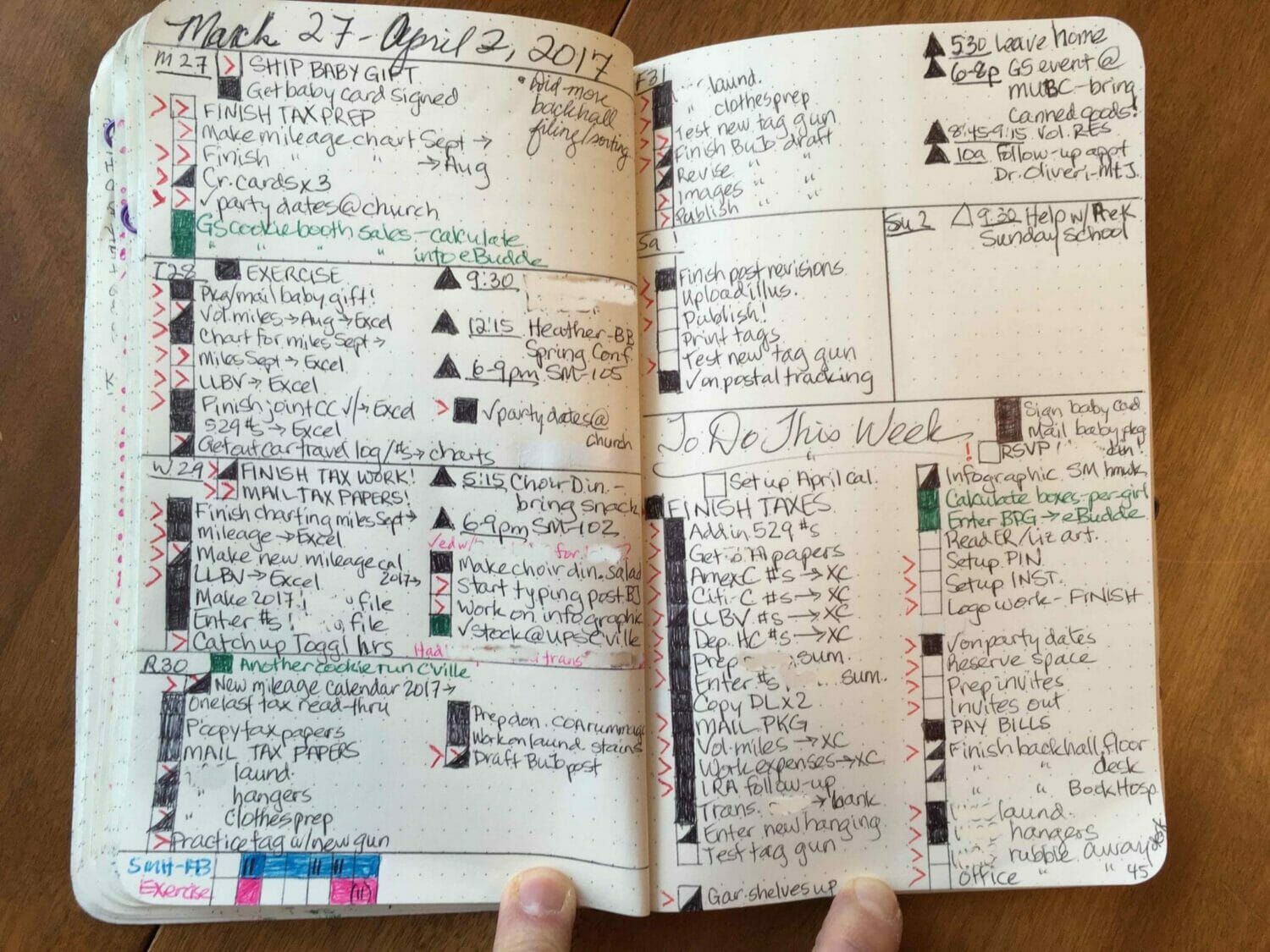

This tension was apparent in our use cases. For example, in real-world use of Inkbase, the habit tracker wouldn’t be programmed onto a blank canvas, but rather added over time as gradual enrichments to a pre-existing tracker you’ve been using for a while. This means implementing it over an existing page that’s already partially filled out:

Example of real-world bullet journal from Super Mom Hacks.

Imagine wanting to add dynamic behavior to this page at this point. You would need to be able to differentiate, for example, between the marks making the boxes and the marks filling them in. Shape recognition is probably necessary to handle this realistic case, but we aren’t sure how to resolve this tension between recognition and having a completely reliable tool with maximum user agency.

Perhaps selecting and then grouping or layering would allow us to separate these marks, but it’s hard to do post hoc (whether done by the user manually or by the system automatically—even more challenging), and grouping or layering adds undesirable interface chrome and modes. This approach also wouldn’t allow the dynamic behavior to be automatically applied to new boxes you draw subsequently, whereas recognition would.

We also need to differentiate between strokes with different meanings—perhaps you want to make different marks in different boxes and do different things depending on the marks. See the photo above: some boxes have a > or X in them or next to them, some are filled in fully or partially diagonally, and some have a combination of the two.

We currently support basic shape recognition. It would allow us, for example, to detect and ignore vertical lines as part of the background, or to recognize a specific mark for filling boxes in (say a single-stroke or two-stroke “X”, though recognizing multi-stroke shapes isn’t currently supported). These approaches would need more work to be reliable and ergonomic to use in this way, and we are generally skeptical that this can be made reliable.

Another example is from sketchy math, which leans more heavily on recognition than some of our other examples.

Sketchy math example using the $1 Unistroke Recognizer to identify a triangle.

Specifically, there are two instances of recognition:

- When a pair of axes is drawn and then grouped, the computer recognizes them as axes.

- When any shape is drawn that looks sufficiently like a triangle or star, the computer recognizes it as a derivative or convolution operator.

Behind the scenes, these use two different methods:

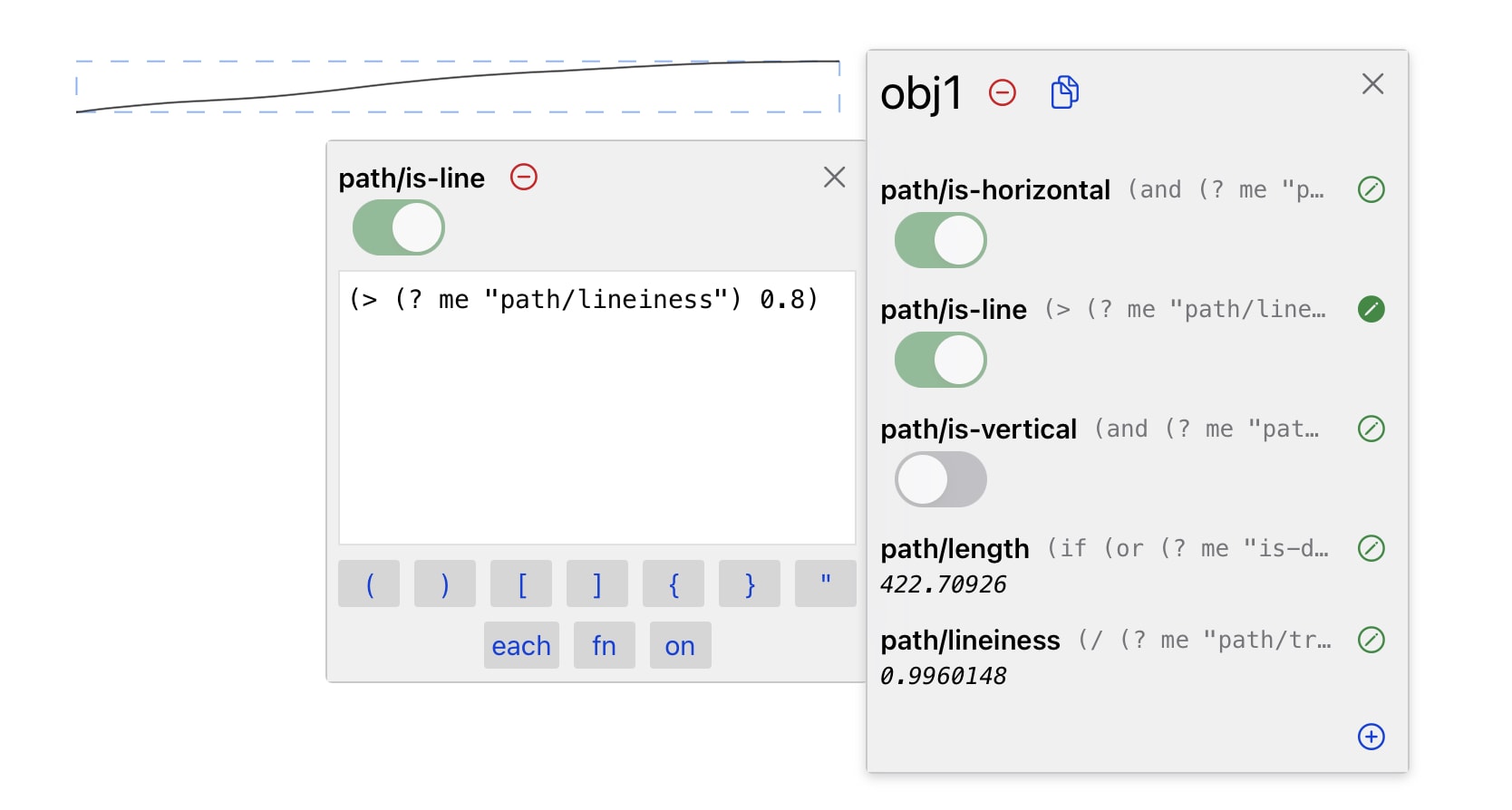

Detecting axes works according to simple, bottom-up, purpose-specific heuristics: if a mark is sufficiently straight (lineiness > 0.8), it is recognized as a line. If a mark recognized as a line is sufficiently horizontal/vertical (-15° < angle < 15° or 75° < angle < 105°), it is recognized as a horizontal/vertical line. If a group is created consisting of one horizontal line and one vertical line, it is recognized as a graph.
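These heuristics are small enough to sketch in a few lines. The Python below is an illustration, not the Inkbase implementation. The text gives the thresholds but not the definition of lineiness, so the sketch assumes it is the straight-line distance between a stroke's endpoints divided by the stroke's path length. The function names are hypothetical.

```python
import math

def path_length(points):
    """Total length of the polyline through the stroke's points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))

def lineiness(points):
    """Assumed definition: endpoint distance over path length (1.0 = perfectly straight)."""
    length = path_length(points)
    if length == 0:
        return 0.0
    return math.dist(points[0], points[-1]) / length

def orientation(points):
    """Classify a stroke as 'horizontal', 'vertical', 'line', or None (not a line)."""
    if lineiness(points) <= 0.8:
        return None
    (x0, y0), (x1, y1) = points[0], points[-1]
    # Fold the direction into [-75, 105) so drawing direction does not matter.
    angle = math.degrees(math.atan2(y1 - y0, x1 - x0)) % 180.0
    if angle >= 105.0:
        angle -= 180.0
    if -15.0 < angle < 15.0:
        return "horizontal"
    if 75.0 < angle < 105.0:
        return "vertical"
    return "line"

def is_graph(group):
    """A group of exactly one horizontal and one vertical line counts as a graph."""
    kinds = sorted(str(orientation(mark)) for mark in group)
    return kinds == ["horizontal", "vertical"]

x_axis = [(0, 0), (50, 2), (100, 3)]      # slightly wobbly, nearly level
y_axis = [(0, 100), (1, 50), (0, 0)]      # drawn bottom to top
squiggle = [(0, 0), (10, 10), (20, 0), (30, 10), (40, 0)]
print(orientation(x_axis), orientation(y_axis), orientation(squiggle))
print(is_graph([x_axis, y_axis]))
```

Because every step is an ordinary function with a visible threshold, a sketcher whose axes fail to register can inspect which test failed and adjust either their drawing or the numbers.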

Example of a mark recognized as a line (lineiness > 0.8) and as horizontal (75° < angle < 105°).

Detecting triangles and stars uses a generic stroke recognition system, the $1 Unistroke Recognizer. This works by comparing drawn marks to template shapes.

Triangles and stars must be drawn in a very specific way to be recognized, which illustrates the reliability challenges with shape recognition. This works well for a demo in which the presenter is practiced in drawing triangles and stars to the recognizer’s liking, but doesn’t lend itself well to organic use. Factors which might reduce this tension:

- Bottom-up, purpose-specific heuristics provide greater visibility into recognition and greater moldability. If a pair of axes fails to be recognized, the sketcher can determine why. Maybe they'll discover the x-axis wasn't recognized as horizontal because it was too tilted. In response, the sketcher can change the way they draw their axes, or change the code to accommodate their own style. This is possible because the recognizer lives in an open programming environment with the sketcher, rather than an inaccessible black box.

- Implicit recognition combined with explicit tagging can be a friendly compromise. For instance, the charts system does not try to recognize every pair of lines as a pair of axes. If it did, either every drawn "t" would have a chance of being detected as a pair of axes, or the axis-detection heuristics would become so complex as to be unworkable. Instead, in our system the sketcher invites the computer to recognize the diagram by explicitly grouping it, rather than letting the recognizer run continuously and indiscriminately over the whole canvas.

- Despite their disadvantages, "black box" algorithms like the $1 Recognizer can be made friendlier. For instance: if the sketcher draws what they think is a star, and the recognizer doesn't recognize it as such, the sketcher should be able to label their mark as a star, adding it to the recognizer's database of known shapes. The next time they draw a star, it is likely to be similar to the last star they drew, and more likely to be recognized. This reflects an advantage of end-user programming: you don't need to teach the computer how to deal with the idiosyncratic styles of every possible user. You just need to teach it how to deal with you, molding it as you go.
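The last point, letting the sketcher label a mark so that it joins the recognizer's set of known shapes, can be sketched with a much-simplified template matcher. This Python example is not the $1 Unistroke Recognizer and not Inkbase code: it skips rotation handling entirely, and the `Recognizer` class, its `teach` method, and the 0.25 threshold are hypothetical choices for illustration.

```python
import math

def resample(points, n=32):
    """Return n points spaced evenly along the stroke's path."""
    cumulative = [0.0]
    for a, b in zip(points, points[1:]):
        cumulative.append(cumulative[-1] + math.dist(a, b))
    total = cumulative[-1]
    if total == 0:
        return [points[0]] * n
    out, seg = [], 0
    for k in range(n):
        target = total * k / (n - 1)
        while seg < len(points) - 2 and cumulative[seg + 1] < target:
            seg += 1
        span = cumulative[seg + 1] - cumulative[seg]
        t = 0.0 if span == 0 else min(1.0, (target - cumulative[seg]) / span)
        (x0, y0), (x1, y1) = points[seg], points[seg + 1]
        out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return out

def normalize(points, n=32):
    """Resample, move the centroid to the origin, and scale to unit size."""
    pts = resample(points, n)
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n
    xs = [x for x, _ in pts]
    ys = [y for _, y in pts]
    size = max(max(xs) - min(xs), max(ys) - min(ys)) or 1.0
    return [((x - cx) / size, (y - cy) / size) for x, y in pts]

def distance(a, b):
    """Mean distance between corresponding points of two normalized strokes."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)

class Recognizer:
    def __init__(self, threshold=0.25):
        self.templates = []        # (label, normalized points) pairs
        self.threshold = threshold

    def teach(self, label, stroke):
        """Add the sketcher's own mark as a new template for `label`."""
        self.templates.append((label, normalize(stroke)))

    def recognize(self, stroke):
        """Return the best-matching label, or None if nothing is close enough."""
        if not self.templates:
            return None
        candidate = normalize(stroke)
        label, score = min(
            ((lbl, distance(candidate, tpl)) for lbl, tpl in self.templates),
            key=lambda pair: pair[1],
        )
        return label if score <= self.threshold else None

my_star = [(0, 0), (4, 10), (8, 0), (-2, 6), (10, 6), (0, 0)]
recognizer = Recognizer()
print(recognizer.recognize(my_star))   # nothing taught yet
recognizer.teach("star", my_star)      # the sketcher labels their own mark
print(recognizer.recognize([(2 * x + 50, 2 * y + 30) for x, y in my_star]))
```

Each taught template biases the matcher toward one person's habits, which is the molding-as-you-go advantage described in the last bullet.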

Microworlds

Most of our examples were built entirely on the iPad, using Inkbase’s interface. Sketchy math was not. Much of the code that runs it—parsing ink-drawn paths and computing derivatives and convolutions—was written on a laptop in a conventional text editor and then merged into the Inkbase code.

This certainly signals limitations of Inkbase. Building larger, more technical software systems in Inkbase becomes extremely difficult for many reasons, from the poor ergonomics of typing with an on-screen keyboard, to the absence of important programming constructs needed to organize larger-scale systems.

But these limitations are not so damning, and the story of the charts system actually illustrates an important use case for Inkbase: that of the microworld, where powerful building blocks are available to be combined in unanticipated ways.

The charts system is a microworld, in that it gives the sketcher access to charts and operations that transform them, and they can combine these building blocks to explore many different domains and contexts. Perhaps it’s acceptable for some building blocks to be made outside of the system if they can be creatively used within it.

This pattern of use is reminiscent of Chalktalk, where objects called “sketches”, defined with JavaScript code, are invoked by drawing with the stylus and then connected together to create new behaviors.

At first glance, the pattern of pre-built microworlds seems like it stands in contrast with programming in the moment, but we think the two can be combined in interesting ways: in Inkbase, the pre-built microworlds are used inside of an environment that is itself a fully programmable system. That means you are not limited to using the components of the microworld. Using the spreadsheet-like reactive substrate, you can connect them to the larger world outside the microworld, or even to other microworlds.

One small example:

Combining end-user programmed behavior with the sketchy math microworld components.

Here we spin a segment around and compute its intersection with a charmingly hand-made circle. As it moves, the y-coordinate of this intersection is plotted on a graph below (as a function of the segment’s angle), and the derivative of this plot is computed. As trigonometry students might expect, the y-coordinate makes a sine curve and its derivative makes a cosine curve.

To make this happen, the sketcher wrote a few lines of code to compute the intersection and feed it into a chart. Then the chart microworld sprang into action and did its derivative magic. Multiple modalities of computational sketchbook use can be combined because they share an underlying communications layer: the reactive spreadsheet.
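The sine and cosine relationship can be checked numerically. The Python sketch below idealizes the setup: it assumes a perfect circle of radius 1 with the segment pivoting at its center, whereas the hand-drawn circle would only approximate this. It stands in for the chart's derivative operator with central differences. The function names are hypothetical.

```python
import math

def intersection_y(angle, radius=1.0):
    """y-coordinate where a segment from the centre, at `angle`, meets the circle."""
    return radius * math.sin(angle)

def numeric_derivative(ys, step):
    """Central differences; the result has two fewer samples than ys."""
    return [(ys[i + 1] - ys[i - 1]) / (2 * step) for i in range(1, len(ys) - 1)]

step = 2 * math.pi / 360                      # one degree per sample
angles = [i * step for i in range(361)]       # one full revolution
ys = [intersection_y(a) for a in angles]      # the plotted curve
dys = numeric_derivative(ys, step)            # the derived curve

# Compare the derived curve with cos(angle) at the interior samples.
max_error = max(abs(dy - math.cos(a)) for dy, a in zip(dys, angles[1:-1]))
print(max_error)
```

The remaining gap between the two curves comes from the finite step size and shrinks as the angle is sampled more finely.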

Findings and open questions

Working with the material

The broad concept of working with the material gestures at a different way of using computers that feels more like working with your hands in the real world and less like poking at a model through a glass pane. Interfaces encouraging the user to play with on-screen content seem to flow easily and naturally into progressive refinement and programming in the moment, which makes this way of working compelling.

This sense of intimacy and working with the material could be enhanced by a number of improvements to the tool, especially around making your work always feel safe (such as unlimited undo) and making as much of the program as possible be visible on the canvas and manipulable with the same hand-tools as ink.

Hand-drawn reactivity feels great & new

A central goal of the project was to see what it feels like to make hand-drawn marks, enhance them with dynamic functionality, and interact with them. Playing with Inkbase and the various demos we built felt unique among our experiences with computing media. Using a pen to interact with a lively reactive system is exciting. Even on its own this encourages us to keep investing in this and related lines of research.

Dynamic annotations can be meaningful

Working with Inkbase, we felt hints of how small amounts of dynamism—even just annotations or bookkeeping aids—could aid our thinking, without interfering with our sense of working with the material.

We believe this is an area with large potential to make thinking tools more valuable. In the pursuit of personal agency in the use of tools—the tool being guided by the user rather than the user being guided by the tool—there is an important distinction between providing helpful information versus just doing the work for you. In the habit tracker example, annotations provide a clearer picture but the user still marks boxes and makes decisions—as opposed to a system that automatically queries your calendar and produces a visualization of your habits.

An inspiring project on this front is INK-12, which explores how the combination of digital tools and freehand drawing can support teaching and learning mathematics in upper elementary school. In the example below, a student visualizes a multiplication problem as an array, and can use a digital tool to slice the array into smaller chunks that are easier to multiply. The computer shows the sizes of the arrays and ensures they always sum to the original whole, but it’s the student who decides where to cut them, moves them around on the canvas, and ultimately still does the math.

INK-12 investigates learning mathematics through a combination of drawing and representational tools with dynamic assistance from the computer. Similar to our mental bookkeeping example above, the computer can provide helpful annotations and check the math while still allowing freedom of thought through freehand sketching. Several interesting examples are shown here.

Grouping/compound objects needs work

We consistently found a need to group multiple strokes into a compound object in order to have useful spatial query results or computed attributes—getting the width of a group of marks, for example. We also wanted to use grouping for logical containers or encapsulation of behavior, like when a large set of marks represents a single todo item.

We designed interface affordances for grouping and prototyped methods for working with collections of marks concisely, but didn’t arrive at an acceptable solution. Part of this is a programming model issue: working with a group or collection requires mapping over or iterating through its members, a set of operations that felt increasingly like it deserved a specialized interface.

Our experience working with Inkbase indicates significant investment is needed in working with groups/collections for a tool like this to be truly useful.

Programming model

The programming model is the least solved part of Inkbase. The ergonomics of programming in it are clearly terrible: heavy text editing on a tablet is bad (and even worse if you’re editing Lisp), inspector panes are cluttered, and much of the program is hidden from normal canvas view. We believe that a wholly different way of thinking about and expressing the “program” is what’s called for, as opposed to trying to make a more ergonomic program editor for the iPad.

Good tools for thought are as much about helping you manage your headspace as anything else, and adding dynamic behavior just as needed allows you to stay in your current headspace rather than switching to a programming one. We’ve been convinced programming in the moment is the right direction for tools for thought since long before we started this project. However, Inkbase showed us both some specific results we’d like to emphasize in future tool design and some acute tensions that need further study:

Toolkits as a byproduct are great

We found it easy with Inkbase to build a reusable toolkit as a byproduct of working on the problem at hand. We might throw together a slider to scrub a value in a model or an operator to center an object between two input marks. We would then use that mini-tool again later on a different model by duplicating it to another page and swapping out its inputs/outputs with a simple insertion of a property using the ? or ! shortcut buttons. This pattern is not only satisfying, but also productive in the amount of saved time and mental focus.

Shared behavior & reusability needs work

While it was easy to re-use a toolkit by rudimentary duplication, there isn’t a correct-feeling method for sophisticated re-use like sharing behavior between many objects. Exploring a formal system for encapsulation and re-use (e.g. prototypes, class/instance, mixins, particles vs fields) is a rich area for future work.

Creation of new objects reactively is unsolved

Creating new marks/objects reactively doesn’t easily fit the current programming model, and we struggled to find a coherent design for it. Imperative object creation is one possible direction, but doesn’t feel correct in this model, which wants to be declarative and reactive. Other areas worth exploring are creation of objects via spreads à la Apparatus, or other means by which objects can be created indirectly by manipulating a group or collection, such as a shape whose area is automatically filled with smaller shapes.

Conclusion

We opened with the question “what would be possible if hand-drawn sketches were programmable like spreadsheets?” We still don’t know the answer, but we found interacting with Inkbase to be extremely compelling—even with its limitations. Moving from sketching by hand to adding dynamic functionality, all within the same environment, is an exciting way of working we haven’t experienced elsewhere.

Many of the areas enumerated above need improvement or deeper research before a tool like this becomes actually usable, and the largest of them revolve around the fundamental programming model. We hope to explore other approaches to the programming model in future projects.

We welcome your feedback: @inkandswitch or hello@inkandswitch.com.

Thanks to all who gave feedback and guidance throughout the project: Marcel Goethals, Peter van Hardenberg, Geoffrey Litt, Ivan Reese, Toby Schachman, Alex Warth, and Adam Wiggins.