Motivation and overview

Creative professionals and the two-step process for developing ideas

Leading up to this project, our team interviewed dozens of creative professionals such as professors, authors, filmmakers, and web designers. Although the details of how these pros work differ, a pattern emerged in this research: they all live or die by the strength of their ideas.

But ideas aren’t summoned from nowhere: they come from raw material, such as other ideas or observations about the world. Hence a two-step creative process: collect raw material, then think about it. From this process comes pattern recognition and, eventually, the insights that form the basis of novel ideas.

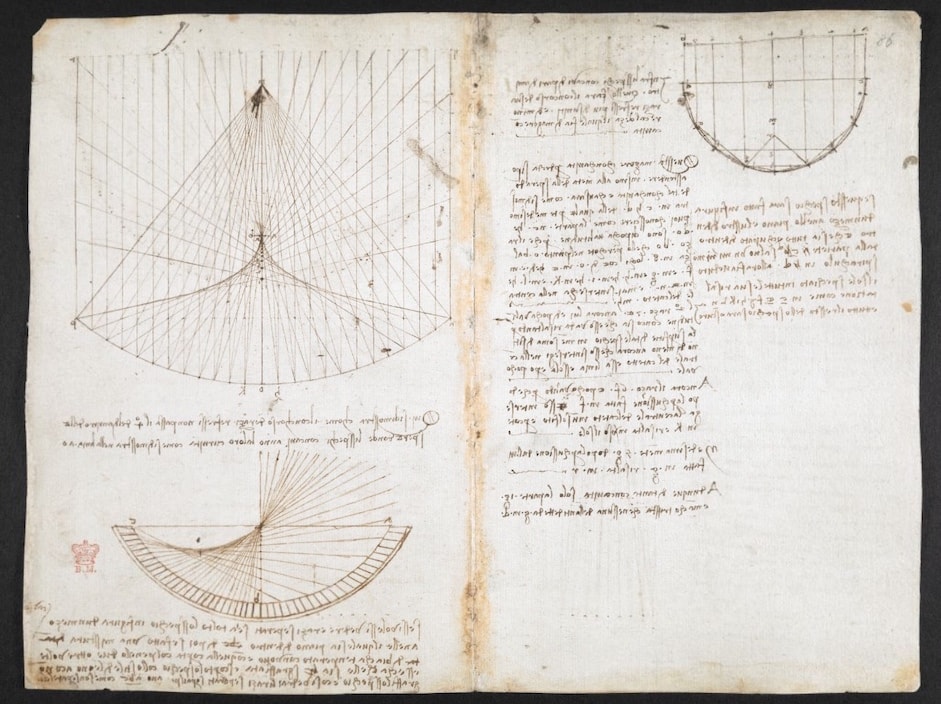

Leonardo da Vinci is the quintessential collect-then-think creative professional. His notebooks include observations from the natural world and excerpts from books he had read; from there he develops his own ideas in written or sketched form. See Leonardo da Vinci by Walter

The collection step is gathering raw material in the form of sketches, photos, websites, text excerpts, and manuscripts in a single location. This can happen all at once in a binge-research session or it can be a slow curation over months or years.

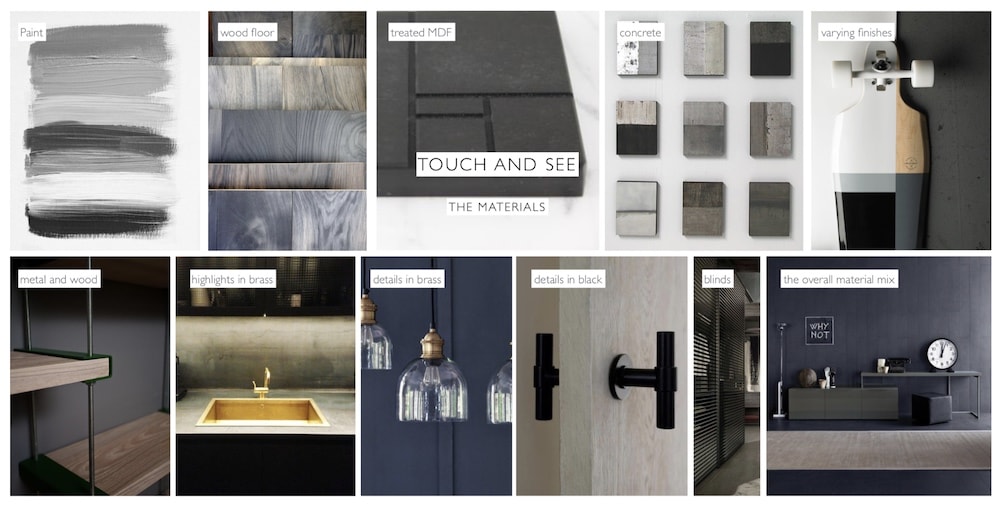

Interior architecture firm

In the thinking step, the creative pro sifts and sorts their collected raw material. They look at it from different angles, rearrange, try to get new perspectives. They are looking for patterns that can lead to fresh insights.

YouTube video essayist and Mark

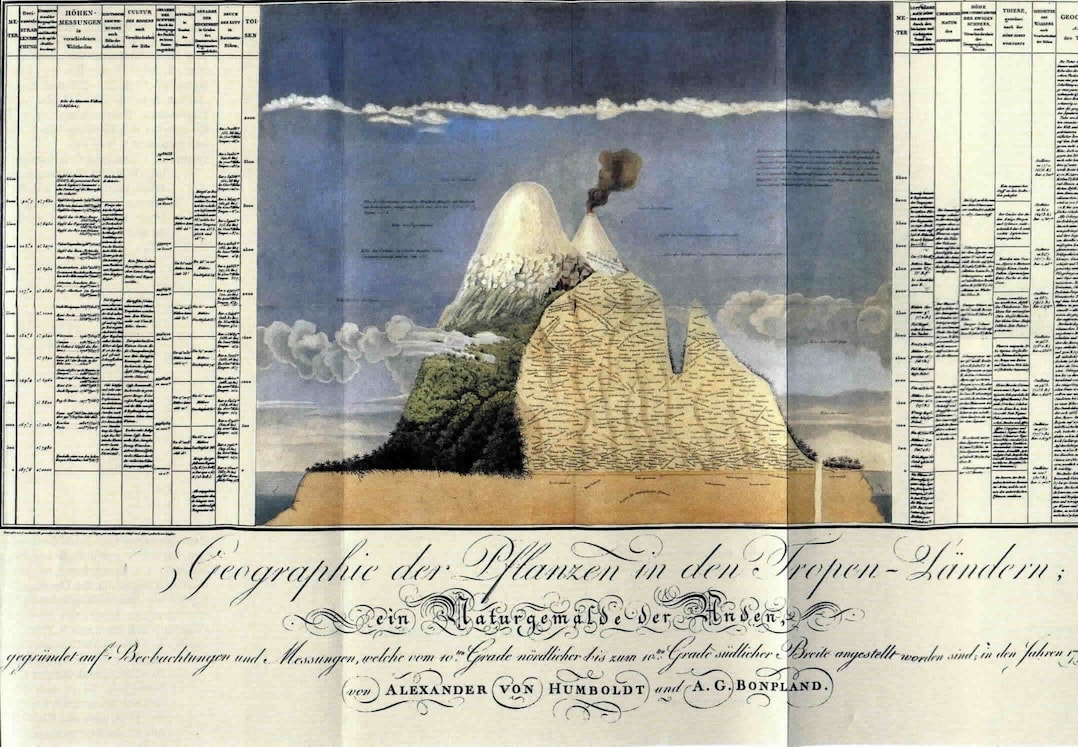

Alexander von

Next, we investigate the tools used by creative professionals in this collect-then-think process.

Existing tools for collecting and thinking

Paper notebook

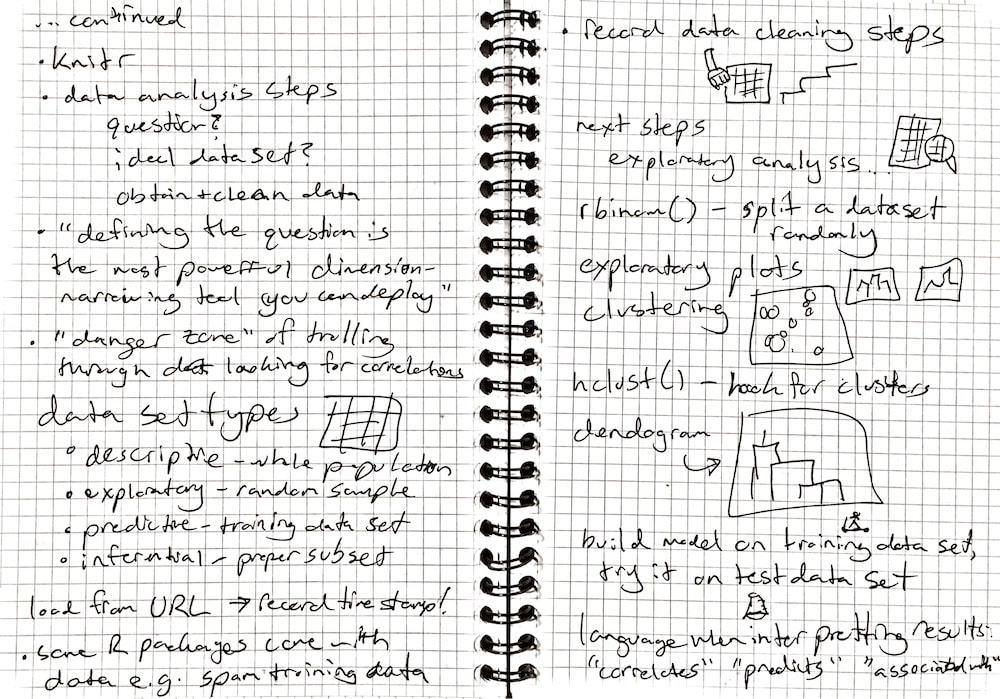

The notebook and pen or pencil is a classic and beloved tool for creative people. Notebooks are sketchy and freeform. Turning over a new page in a notebook to capture a fresh thought is fast and easy. And notebooks are portable but also offer a comfortable posture for thinking.

Handwritten lecture notes from a data science course.

Notebooks can be a blank canvas for recording observations and ideas, but they can also be a collection space: for lecture or meeting notes, excerpts from other works such as in a commonplace

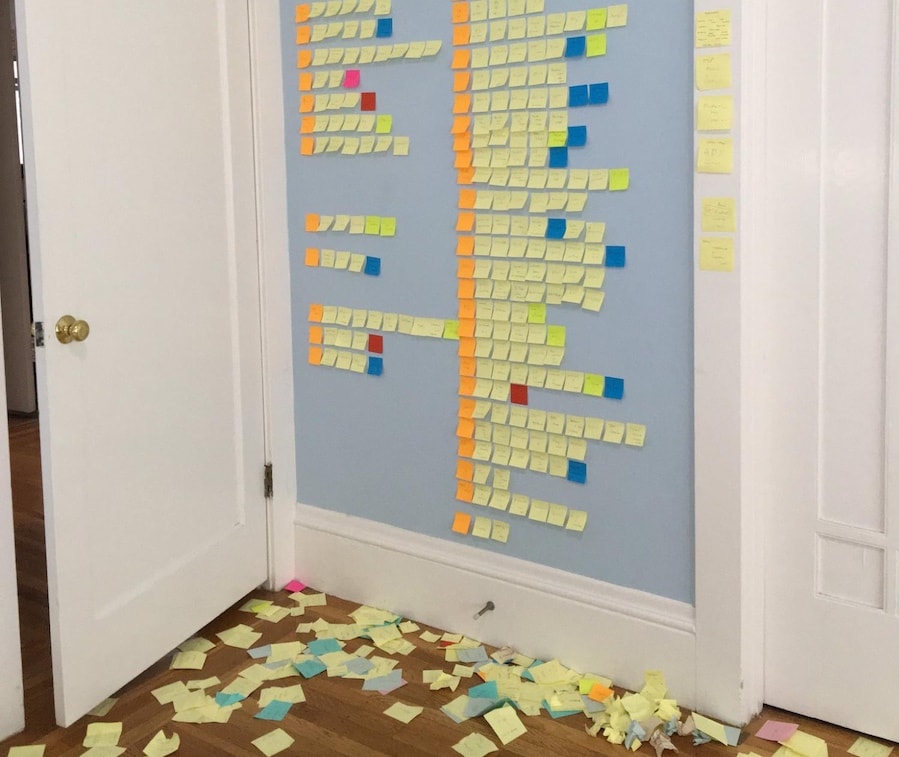

Post-its and war rooms

Marcin

Post-it notes on a wall, index cards on a desk or pinboard, and war

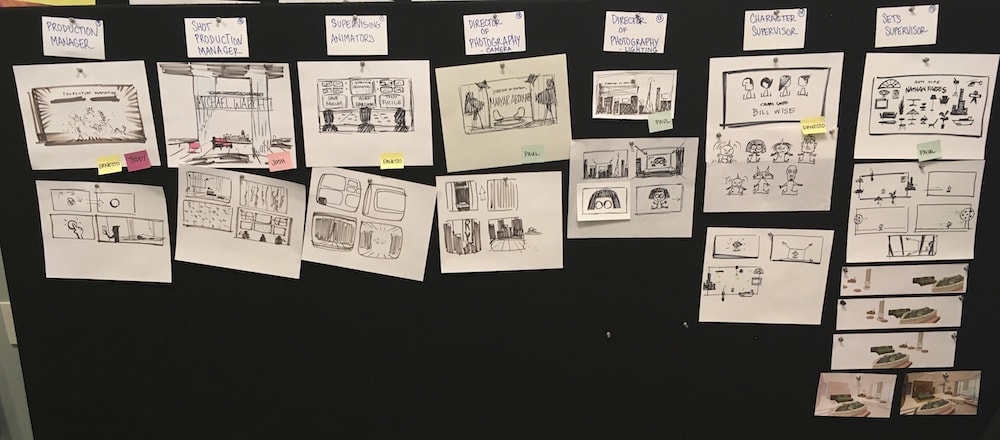

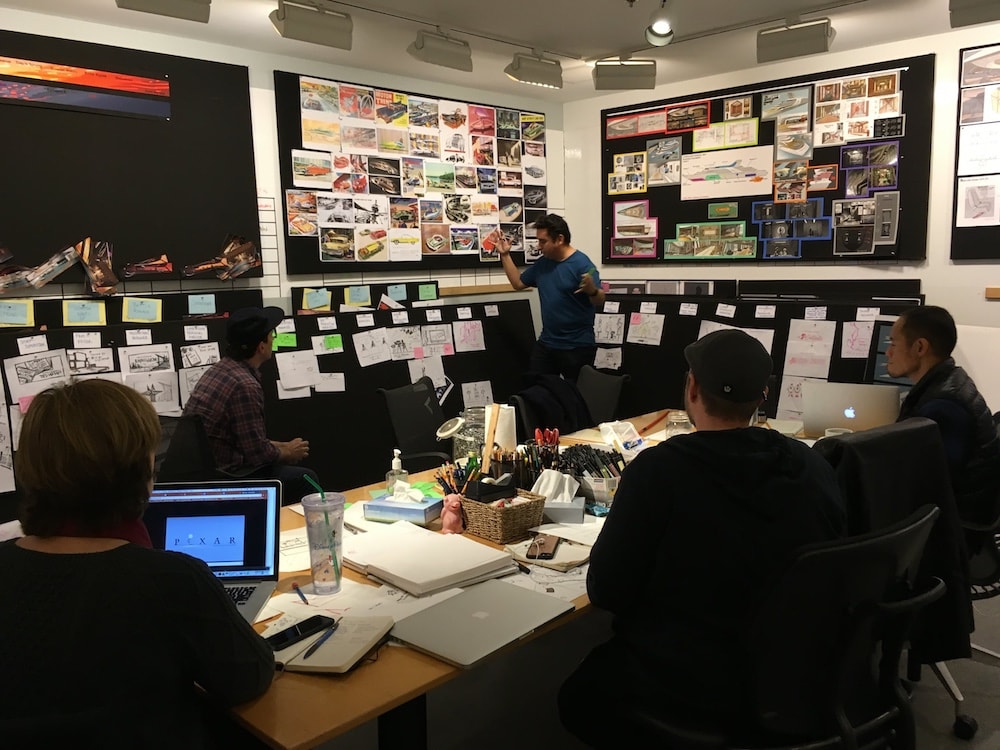

Pinboards used in an art room at

From migratory birds to open-sea navigation by pre-civilization

Pinboards, chalkboards, and war rooms tap into our innate capabilities for spatial reasoning — and have the additional benefit of being a way for small groups to collaborate on collect-then-think.

Note-taking apps

Almost all creative professionals use a smartphone-based note-taking app for quick capture of thoughts on the go. Examples include Evernote, iOS Notes, Google Keep, Drafts, Bear, and iA Writer.

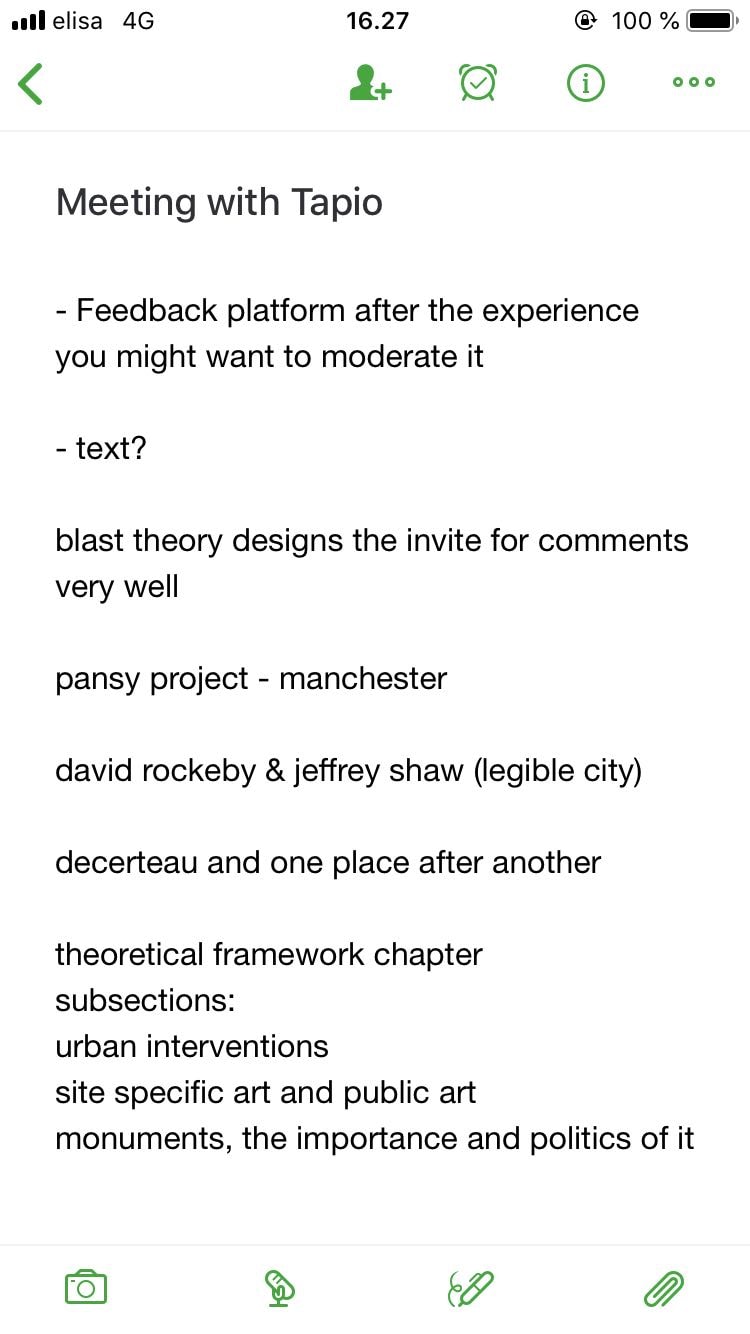

Meeting notes in the Evernote smartphone app.

These tools are great for mobility and for sketching in pure text (such as an outline or first draft of an email, paper, or article). But their usefulness is narrow, because they handle images, web content, and other media types poorly.

Virtual pinboards

Virtual pinboards like

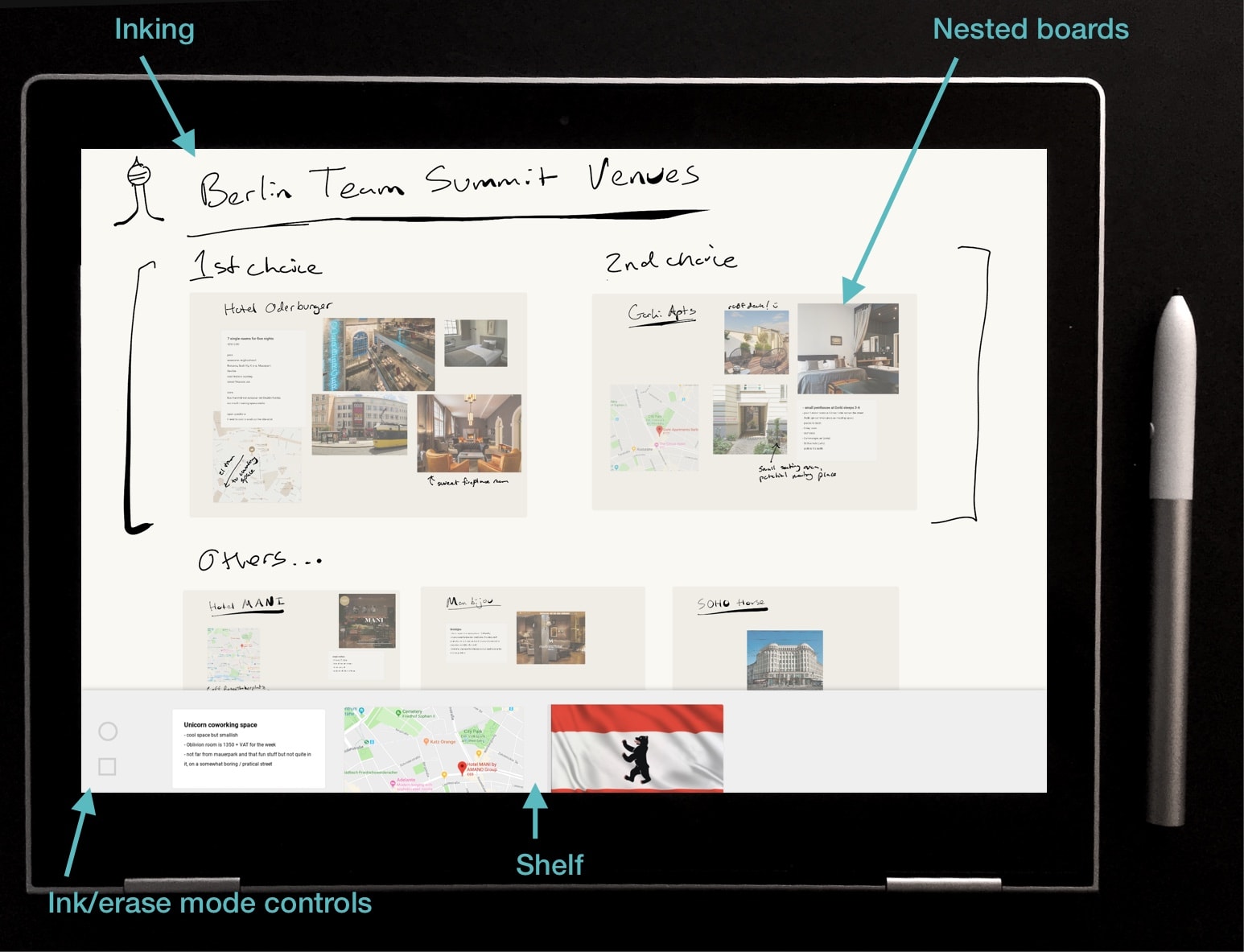

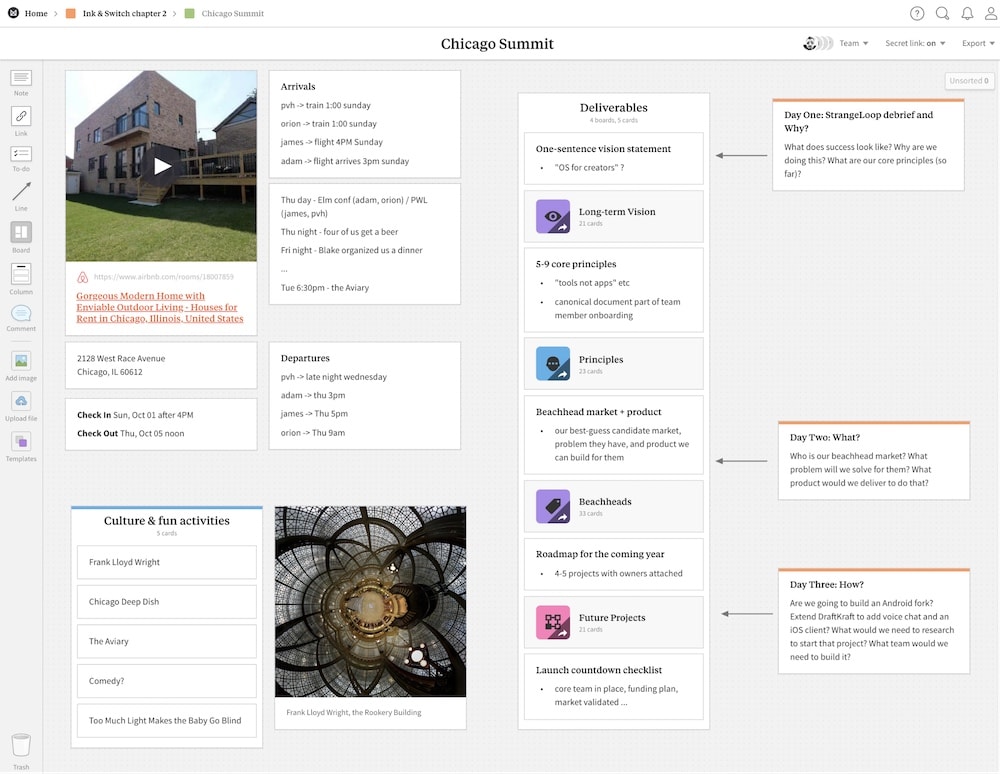

A team summit planning document in the Milanote collaborative pinboard.

Other pinboard-like digital tools include

Although most of these remain niche products that have not found mainstream usage with creative professionals, we think they show promise as a way to bring the best parts of the analog post-its/war-room approach into the digital realm.

Filesystems

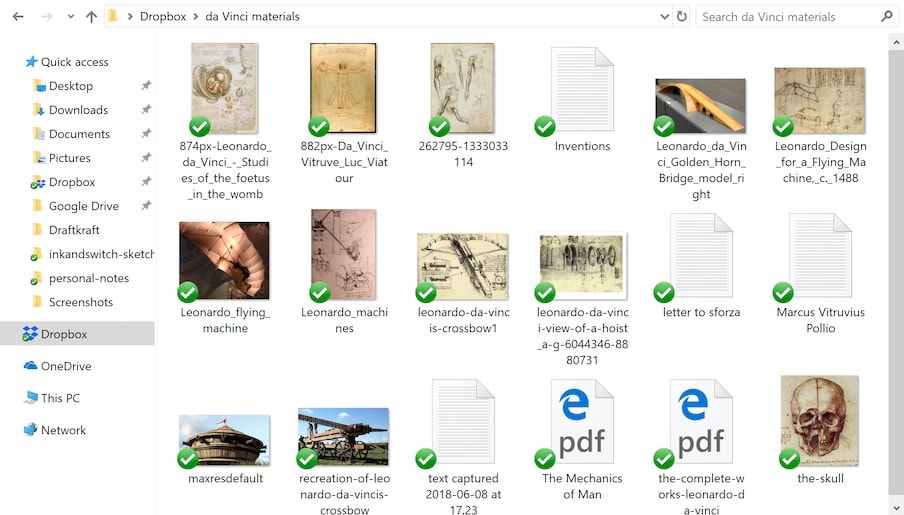

A venerable approach to collecting raw material is simply to save it to a hard drive and browse it with the file browser provided by the operating system.

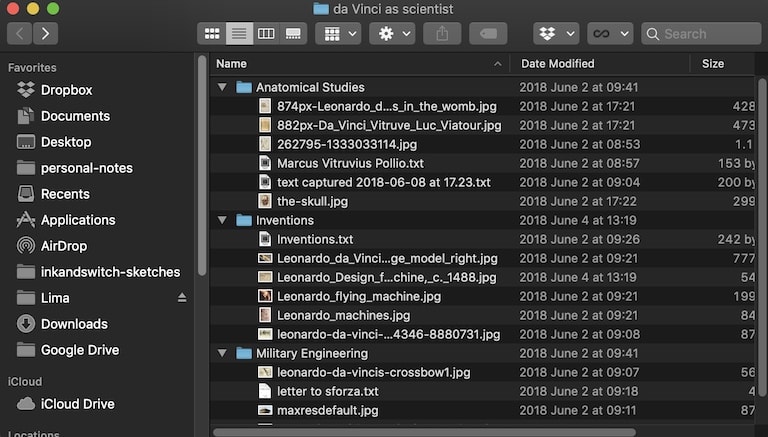

A collection of research on Leonardo da Vinci stored on a hard drive.

Related and adjacent tools include Dropbox and Network Attached Storage devices. File formats like .txt and

But files also tend to be clumsy and old-school, poorly adapted for a multimedia-, web-, and mobile-oriented world.

Digital sketchbooks

Digital sketchbooks and annotation tools with first-class stylus support include

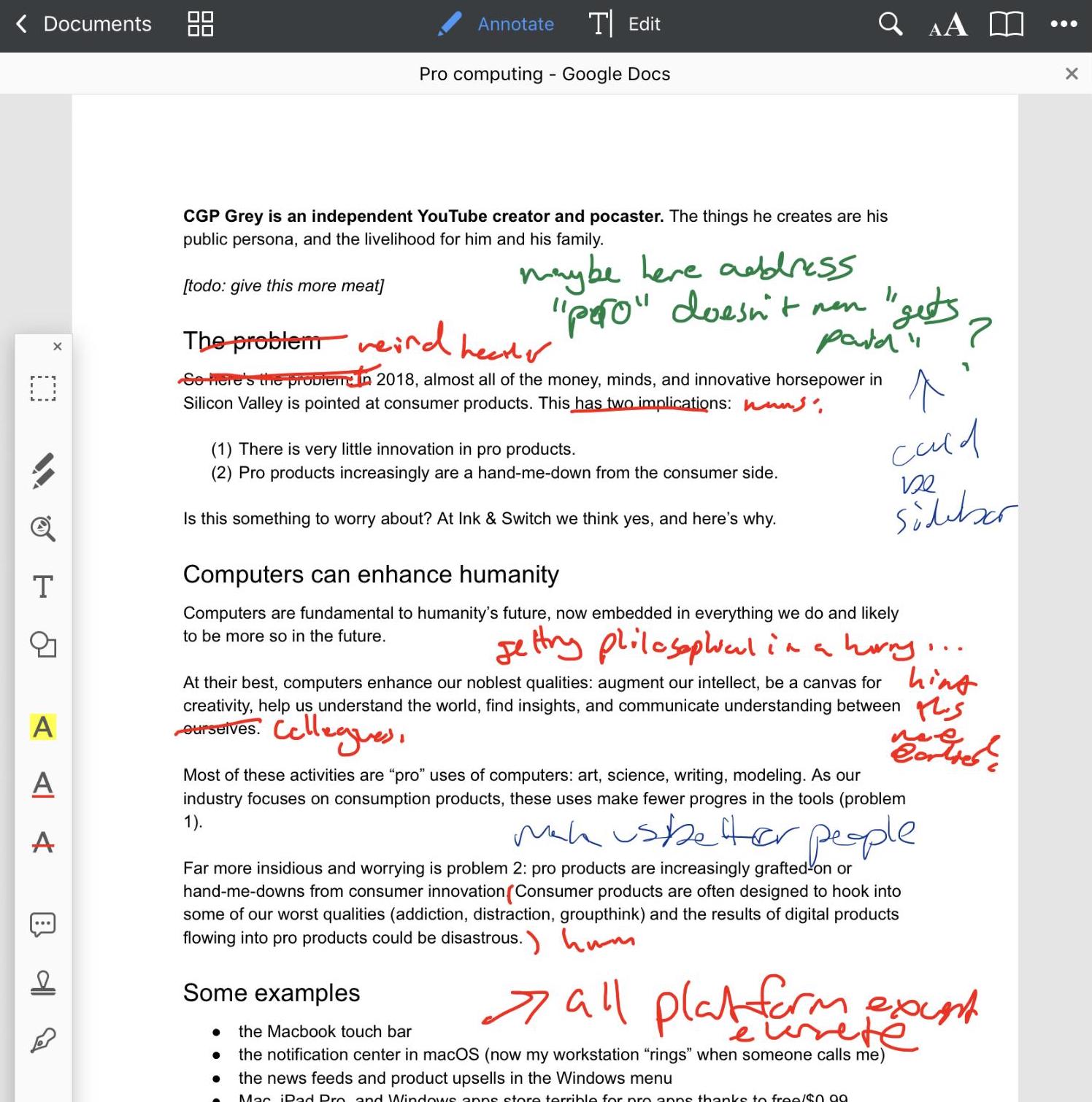

Excerpt from the author’s personal annotations in PDFExpert.

Excerpt from Martin

These have found some traction with the pros we’ve interviewed. The form factor suggests the analog equivalent of notebook and pen, and freeform scribbling is conducive to the creative process. But these apps usually optimize for drawing on a blank canvas or annotating a single image or PDF.

Project vision

We started with the observation that creative professionals develop their ideas in a two-step process. We saw that they get great value from tools that support that process. In surveying those tools, we were inspired by capabilities like freeform sketching and spatial navigation, but also saw many gaps, such as a lack of support for mixed media.

Putting all of these ideas together, we set out to build Capstone: a digital tool for the collect-then-think process of developing ideas.

Capstone’s vision comes in three main pillars:

- A digital-information collection tool. Pros need a place to collect many types of digital media: the raw material for their thinking process. Our focus for this project is collection done on a desktop computer’s web browser.

- A place to sift, sort, find patterns and insights. Once the raw material is collected, the user should be able to sift and sort through it in a freeform way. All media needs to be represented in the same spatially-organized environment.

- Use a tablet and stylus to its full potential. Pen-and-paper solutions, especially the A4 notebook, seem to be more conducive to thinking and having better ideas. Our hypothesis is that the thinking step should happen on a tablet with freeform inking via the stylus.

Demo video

Here is a full walkthrough of the resulting application, showing a personal use-case from one of our team members making a life decision about a move to a new city.

In the example, the user wants to record the details of his experience into an archive. That archive will be (1) a place for him to sift, think, and find insights that might guide his decision about whether Austin is a good place for him to live and work longer term, and (2) a visual aid for him to use when explaining to colleagues, friends, or family about his time in the city.

While the use case as shown in the video is simple, we hope you can extrapolate to what a more mature version of this tool might enable.

What we built

With the project vision established, this next section describes what we built and why.

Briefly, the components are:

- Capstone Clipper browser extension to collect text, images, and websites on a desktop computer

- The shelf as a portal to transmit collected material to the Capstone tablet

- Tablet thinking space with mixed media cards and inking

- Spatial navigation with zoomable boards

- No titles on items

- The shelf as a re-imagined copy-paste buffer

- Card duplication with mirror

- Command paradigm of hands are safe, stylus edits

- Use the edges of the tablet to create and delete cards

- The unsolved problem of stylus tool selection

Let’s go deeper on each.

The collection step

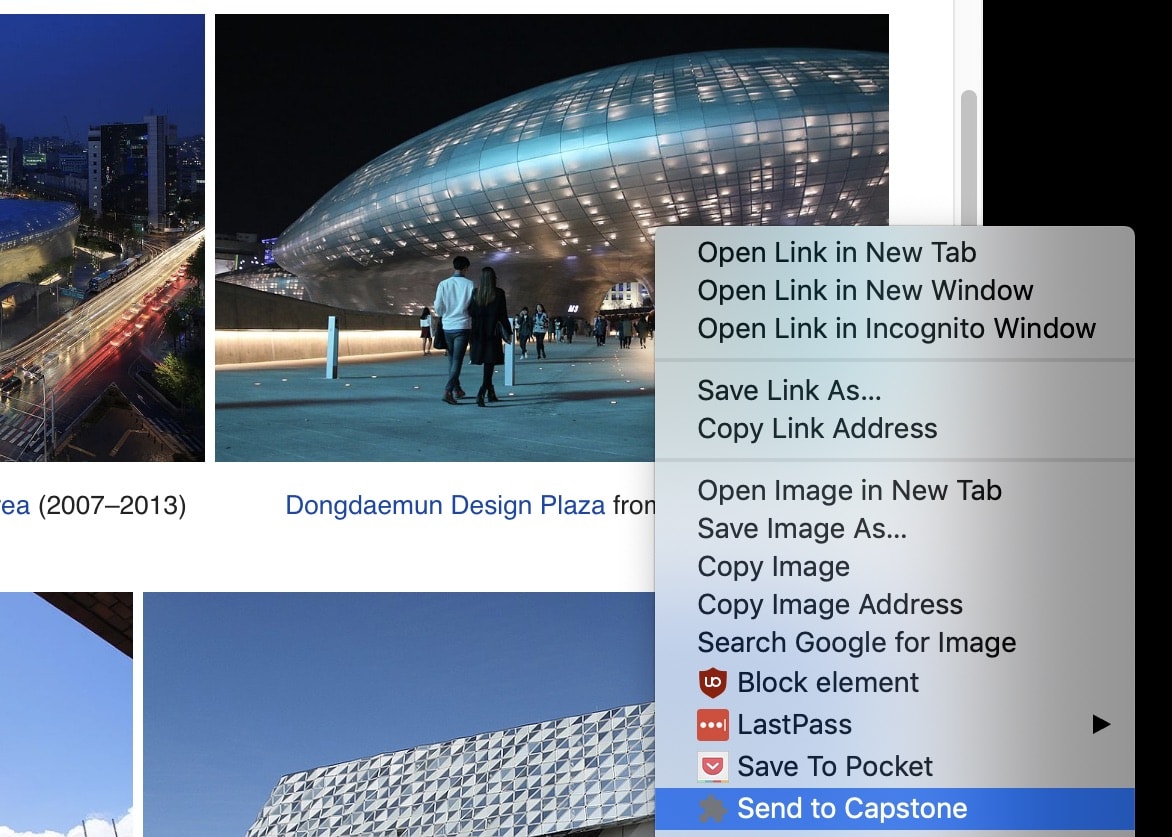

Capstone

Clip images, text, websites

The Capstone clipper can capture media from a desktop computer by dragging in files, pasting from the copy-paste buffer, or capturing directly from the browser.

Capstone users can drag images or paste text on their desktop computer into the shelf.

Right-click context menu offers “Send to Capstone” option for images and text.

Clipped items are saved into the user’s shelf.
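The context-menu path can be pictured as a small translation step from what the browser reports about a click into a shelf item. The sketch below is written in TypeScript and assumes a hypothetical `ShelfClip` type and `clipFromContextMenu` function; the `ClickInfo` interface is a locally declared subset of the fields Chrome passes in `chrome.contextMenus.OnClickData`, so the logic runs outside an extension.

```typescript
// A subset of the fields Chrome passes to contextMenus.onClicked listeners
// (chrome.contextMenus.OnClickData), declared locally so this sketch runs
// outside an extension.
interface ClickInfo {
  pageUrl: string;
  mediaType?: string;
  srcUrl?: string;
  selectionText?: string;
}

// Hypothetical shape of an item placed on the shelf.
type ShelfClip =
  | { kind: "image"; src: string; sourcePage: string }
  | { kind: "text"; text: string; sourcePage: string };

// Turn a "Send to Capstone" click into a shelf item, or null if there is
// nothing this sketch knows how to clip.
function clipFromContextMenu(info: ClickInfo): ShelfClip | null {
  if (info.mediaType === "image" && info.srcUrl) {
    return { kind: "image", src: info.srcUrl, sourcePage: info.pageUrl };
  }
  if (info.selectionText && info.selectionText.trim() !== "") {
    return { kind: "text", text: info.selectionText, sourcePage: info.pageUrl };
  }
  return null;
}
```

In a real extension, this function would sit behind a menu item registered with `chrome.contextMenus.create` using the `"image"` and `"selection"` contexts, plus a listener on `chrome.contextMenus.onClicked`; how Capstone itself wired this up is not described here.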

Shelf as a portal

The shelf is a freeform space that appears on the user’s desktop and on their tablet. The user can mentally model this as a portal that is always the same between the two devices.

On the desktop, the shelf is a regular window. On the tablet, the shelf is an area in the lower part of the screen which can be summoned by dragging up from the bottom of the screen, and hidden by dragging down.
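As an illustration of the summon/hide behavior, here is a minimal sketch that treats the tablet shelf as a two-state machine driven by one completed vertical drag. The function name, the 40-pixel bottom-edge zone, and the 80-pixel travel threshold are assumptions for illustration, not values taken from Capstone.

```typescript
// Hypothetical thresholds, chosen for illustration only.
const EDGE_ZONE_PX = 40;
const TRAVEL_THRESHOLD_PX = 80;

type ShelfState = "hidden" | "visible";

interface VerticalDrag {
  startY: number; // where the finger first touched, in screen pixels
  endY: number; // where the finger lifted
}

// Decide the shelf's next state from one completed vertical drag.
// Screen y grows downward, so a negative delta means the finger moved up.
function nextShelfState(
  current: ShelfState,
  drag: VerticalDrag,
  screenHeight: number,
): ShelfState {
  const delta = drag.endY - drag.startY;
  const startedAtBottomEdge = drag.startY >= screenHeight - EDGE_ZONE_PX;

  if (current === "hidden" && startedAtBottomEdge && delta <= -TRAVEL_THRESHOLD_PX) {
    return "visible"; // dragged up from the bottom edge: summon the shelf
  }
  if (current === "visible" && delta >= TRAVEL_THRESHOLD_PX) {
    return "hidden"; // dragged down far enough: hide the shelf
  }
  return current; // too short, or started in the wrong place: no change
}
```

For example, on a 1200-pixel-tall screen, `nextShelfState("hidden", { startY: 1190, endY: 1000 }, 1200)` returns `"visible"`, while the same upward drag starting mid-screen leaves the shelf hidden.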

The thinking step

Now we get to the heart of Capstone: the freeform sift/sort/arrange interface running on the tablet.

Media cards + inking

Capstone’s interface brings together freeform arrangement of media cards (images, text, web clippings, PDFs) and inking (sketches, diagrams, handwritten text, other annotations) in the same space.

Media cards can be arranged freely, like index cards on a table or post-its on a wall. We sought to avoid the precision typically demanded by digital tools such as Illustrator or Keynote, which require the user to work with selections, rotate and resize handles, or front/back arrangement. Instead, we wanted the user to move or resize a card immediately, in a single gesture.
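As one concrete reading of "a single gesture," the sketch below decides at touch-down whether a drag moves or resizes a card (based on whether it lands in a hypothetical 24-pixel bottom-right corner zone) and then applies the drag delta directly, with no selection step. The corner zone, the 48-pixel minimum size, and the function names are assumptions for illustration.

```typescript
interface Card {
  x: number;
  y: number;
  width: number;
  height: number;
}

type DragMode = "move" | "resize";

// Hypothetical values for illustration.
const RESIZE_CORNER_PX = 24;
const MIN_SIZE_PX = 48;

// Decide once, at touch-down, what this drag will do: no selection step,
// no separate handle mode.
function modeForPress(card: Card, px: number, py: number): DragMode {
  const nearRight = px >= card.x + card.width - RESIZE_CORNER_PX;
  const nearBottom = py >= card.y + card.height - RESIZE_CORNER_PX;
  return nearRight && nearBottom ? "resize" : "move";
}

// Apply the total drag delta (dx, dy) to the card as it was at touch-down.
function applyDrag(start: Card, mode: DragMode, dx: number, dy: number): Card {
  if (mode === "move") {
    return { ...start, x: start.x + dx, y: start.y + dy };
  }
  return {
    ...start,
    width: Math.max(MIN_SIZE_PX, start.width + dx),
    height: Math.max(MIN_SIZE_PX, start.height + dy),
  };
}
```

Computing each frame from the touch-down snapshot plus the total delta, rather than accumulating per-event deltas, keeps the card from drifting away from the stylus tip over a long drag.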

Inking can happen anywhere. The user can draw and erase on a board and over the top of text, image, and web cards.

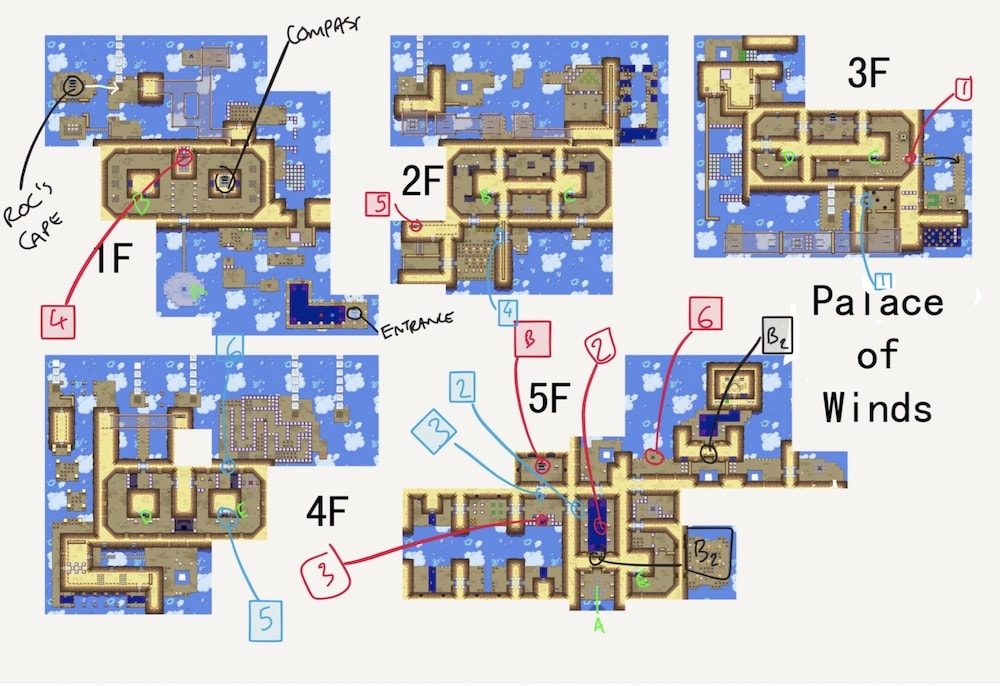

Spatial navigation with zoomable boards

In Capstone, the user organizes their clippings and sketches into nested boards which they can zoom in and out of. Movement is continuous and fluid using pinch in and out gestures.

Our hypothesis was that this would tap into spatial memory and provide a sort of digital memory

Pinch gestures for zooming in and out are familiar from many touchscreen apps, such as Google Maps. We like the association with real-world navigation, and see it as a good example of innovation from the mobile world that can be brought to bear on creative tools.
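Pinch zoom that feels anchored to the fingers is commonly implemented by keeping the world point under the pinch midpoint fixed while the scale changes. A minimal sketch of that general technique, assuming a camera where screen = world × scale + pan; the `Camera` shape and function names are hypothetical.

```typescript
interface Point {
  x: number;
  y: number;
}

// screen = world * scale + pan
interface Camera {
  scale: number;
  panX: number;
  panY: number;
}

const distance = (a: Point, b: Point): number => Math.hypot(a.x - b.x, a.y - b.y);
const midpoint = (a: Point, b: Point): Point => ({ x: (a.x + b.x) / 2, y: (a.y + b.y) / 2 });

// Compute the camera for the current frame of a two-finger pinch, given the
// camera and finger positions when the pinch began.
function pinchCamera(start: Camera, begin: [Point, Point], now: [Point, Point]): Camera {
  const scale = start.scale * (distance(now[0], now[1]) / distance(begin[0], begin[1]));
  const m0 = midpoint(begin[0], begin[1]);
  const m1 = midpoint(now[0], now[1]);
  // World point that was under the fingers when the pinch began...
  const worldX = (m0.x - start.panX) / start.scale;
  const worldY = (m0.y - start.panY) / start.scale;
  // ...stays under the fingers' current midpoint (which also handles panning).
  return { scale, panX: m1.x - worldX * scale, panY: m1.y - worldY * scale };
}
```

With nested boards, crossing some scale threshold could hand navigation off to the child board under the fingers; that hand-off is not shown here.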

No titles

Computer applications typically list items by a title, such as a filename. This abstract representation doesn’t match how the document looks (although sometimes it is accompanied by a preview).

The Windows File Explorer emphasizes filename first, with thumbnail previews for only some file types.

The Files app on iPad gives more prominence to the item title (here, the name of each presentation) than the tiny thumbnail preview. Which is the user more likely to remember, the name of the item or how it looks?

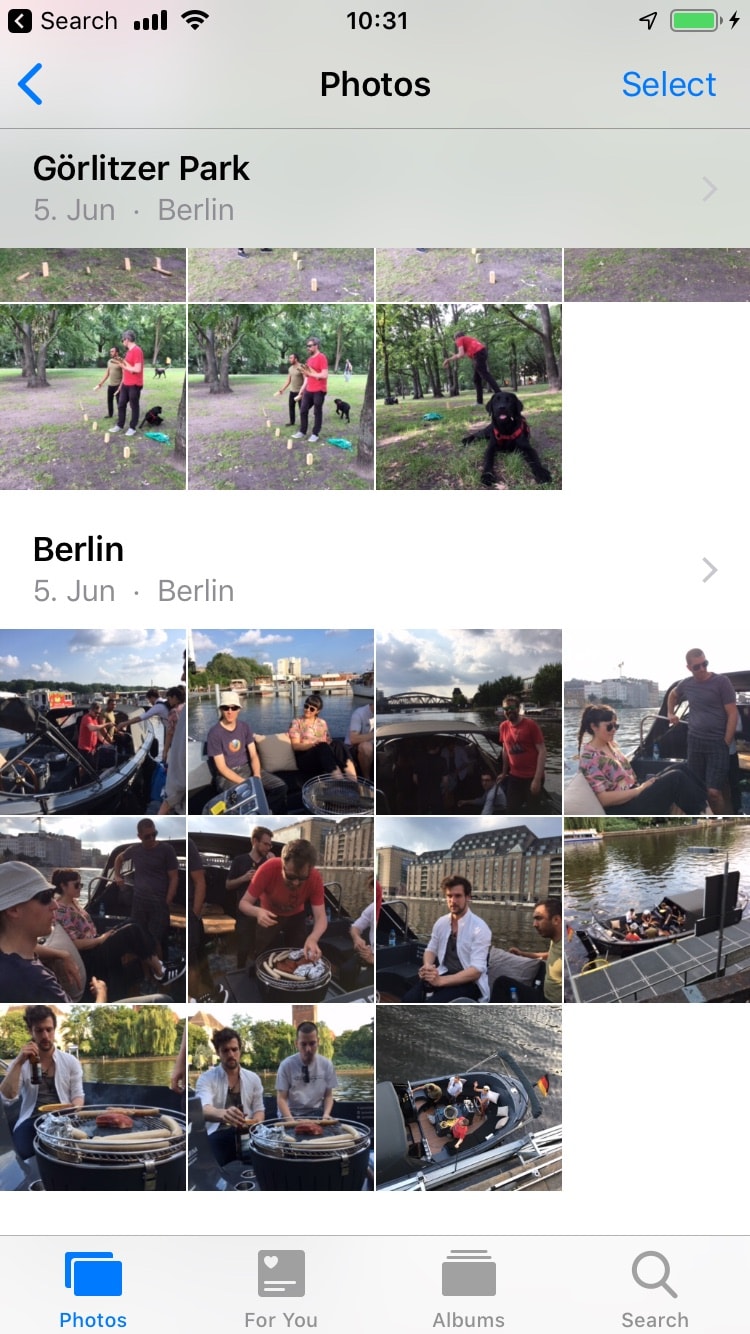

The iOS camera roll offers a more natural way of representing documents: all of the item’s space is given to a small version of the photo. The filename (e.g. “IMG_4879.JPG”) does not appear anywhere.

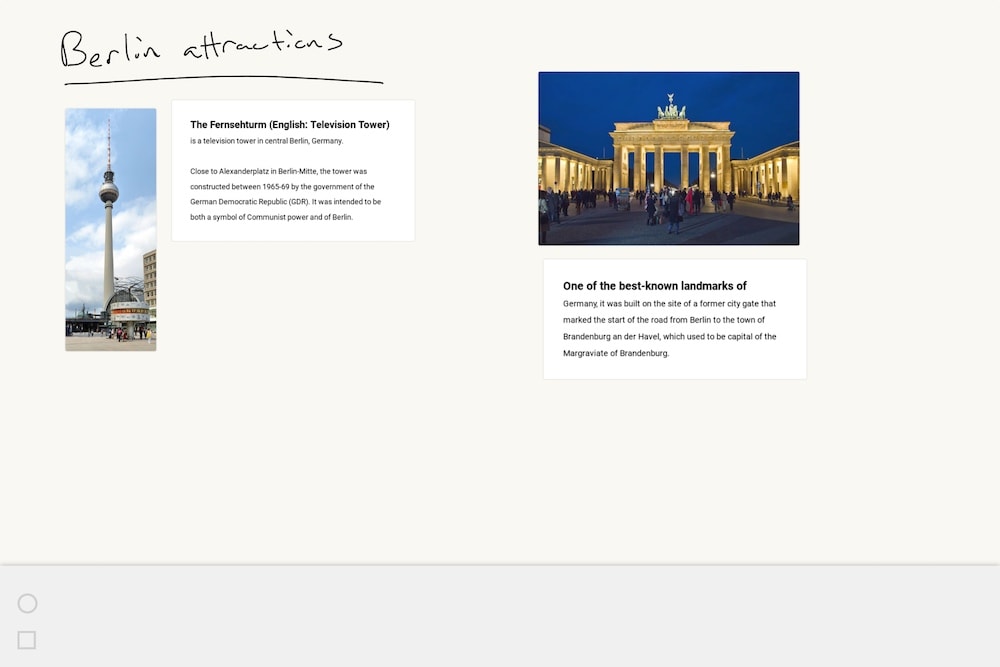

Our idea for Capstone is that representations should look like the document. Some media types, like images and short texts, can display the whole document. Web clips, multi-page PDFs, and longer text excerpts cannot be shown in full, but we can show a reasonable summary.

In Capstone, documents look exactly like themselves at all times, with no document titles other than what the user may choose to scribble on the boards. The board pictured above has no explicit title, and the image and text cards have no title or filename.

The shelf as reimagined copy-paste

In desktop operating systems, cut-and-paste is a crucial capability. Pro users have ctrl-C/ctrl-V hotkeys in their muscle memory.

But the traditional copy-paste buffer is due for a refresh. It can only hold one thing at a time, it can’t be edited, and it is invisible. On touch devices, copy-paste is typically hidden behind a set of clumsy submenus.

Capstone’s solution is called the shelf. As described above, the shelf is a portal between the desktop computer and the tablet, a sort of private inbox. Within the sifting/sorting/organizing step, it becomes more: a scratch space, a cozy peripheral area that has the same spatial capabilities as boards but always travels with the user.

The user can move or duplicate cards from any board and put them on the shelf, navigate to another board, and then drop them there. Like traditional copy-and-paste, the shelf is a buffer that stays with the user as they navigate around the app; unlike traditional copy-and-paste, it is manifest on the screen, can hold multiple items at the same time, and can be edited.
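The shelf's differences from a traditional clipboard (visible, multi-item, editable, and carried across boards) can be expressed as a small data structure. The `Shelf`, `Board`, and `Item` types below are hypothetical, a sketch of the described behavior rather than Capstone's actual model; ids for duplicates are passed in so the example stays deterministic.

```typescript
interface Item {
  id: string;
  kind: "image" | "text" | "web" | "board";
}

interface Board {
  id: string;
  items: Item[];
}

// Hypothetical model of the shelf as a visible, multi-item, editable buffer.
class Shelf {
  readonly items: Item[] = [];

  // Move: lift an item off a board and hold it on the shelf.
  takeFrom(board: Board, itemId: string): void {
    const index = board.items.findIndex((item) => item.id === itemId);
    if (index < 0) throw new Error(`no item ${itemId} on board ${board.id}`);
    const [item] = board.items.splice(index, 1);
    this.items.push(item);
  }

  // Duplicate: leave the original in place and put a copy on the shelf.
  copyFrom(board: Board, itemId: string, newId: string): void {
    const item = board.items.find((i) => i.id === itemId);
    if (!item) throw new Error(`no item ${itemId} on board ${board.id}`);
    this.items.push({ ...item, id: newId });
  }

  // Drop an item from the shelf onto whichever board the user navigated to.
  dropOnto(board: Board, itemId: string): void {
    const index = this.items.findIndex((i) => i.id === itemId);
    if (index < 0) throw new Error(`no item ${itemId} on the shelf`);
    const [item] = this.items.splice(index, 1);
    board.items.push(item);
  }

  // Unlike the system clipboard, the shelf's contents can be edited directly.
  discard(itemId: string): void {
    const index = this.items.findIndex((i) => i.id === itemId);
    if (index >= 0) this.items.splice(index, 1);
  }
}
```

Because the shelf holds a list rather than a single slot, the user can gather items from several boards before dropping any of them, which a one-item copy-paste buffer cannot do.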

Item duplication with mirror

Another important function in power tools is the ability to duplicate an item. Desktop productivity tools often have a “duplicate” or “clone” context menu item, and the user can also copy-paste.

Our approach in Capstone is the mirror command. The user can mirror a card by “pinning” down the card with one finger and dragging out with the stylus.

The finger+stylus hybrid action is an example of what can be done if a tablet app seeks to reach beyond the limitations imposed by the one-handed, small-screen assumptions typically used when designing for touch devices.
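One way to recognize the mirror gesture is to look for a finger (touch pointer) resting on a card while the stylus (pen pointer), which started on the same card, has moved past a small threshold. The `pointerType` values `"touch"`, `"pen"`, and `"mouse"` come from the Pointer Events specification; the `ActivePointer` shape, the 30-pixel threshold, and `detectMirror` are hypothetical.

```typescript
// Values of PointerEvent.pointerType defined by the Pointer Events spec.
type PointerKind = "touch" | "pen" | "mouse";

// Hypothetical per-pointer record kept by the app while pointers are down.
interface ActivePointer {
  id: number;
  kind: PointerKind;
  cardId: string | null; // card under the pointer at touch-down, if any
  startX: number;
  startY: number;
  x: number;
  y: number;
}

const MIRROR_DRAG_PX = 30; // hypothetical threshold

// Returns the id of the card to mirror, or null if the "finger pins,
// stylus drags out" pattern is not present.
function detectMirror(pointers: ActivePointer[]): string | null {
  const pen = pointers.find((p) => p.kind === "pen");
  if (!pen || pen.cardId === null) return null;
  const pinned = pointers.some((p) => p.kind === "touch" && p.cardId === pen.cardId);
  const travelled = Math.hypot(pen.x - pen.startX, pen.y - pen.startY);
  return pinned && travelled >= MIRROR_DRAG_PX ? pen.cardId : null;
}
```

Requiring both a pinning finger and a minimum stylus travel keeps an ordinary stylus drag (no finger) from duplicating cards by accident.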

Using the tablet and stylus

That covers Capstone’s features supporting the collection and thinking steps of the creative process. Now we’ll address form factor.

Our user research suggests that the A4 notebook shape, especially when paired with a comfortable reading chair or writing desk, is an ideal posture for thinking and developing ideas. That suggests the digital form factor of tablet and stylus.

Existing tablet apps on mobile platforms don’t tend to be very powerful. We speculate this is because they are built as scaled-up smartphone apps, hence they don’t take full advantage of the expressiveness of two hands and a stylus.

Another design approach to tablet apps is to treat them as a port of desktop applications. Attempts to map mouse and keyboard command vocabularies to a touchscreen environment are often clumsy and confusing.

Capstone’s vision is to avoid both of these problems by treating the tablet as distinct from smartphones or desktop computers. Instead, the tablet is a third class of computing device with its own capabilities and place in the user’s life.

Here are three UI approaches we tried in our efforts to make the tablet a device with its own identity.

Hands are safe, stylus edits

The command model for most tablet and phone apps is onscreen chrome (buttons, toolbars, etc) that the user can tap to activate the app’s functions. The stylus is either undifferentiated (treated identically to a finger) or sometimes offers a limited activity such as a separate markup mode.

For Capstone, we tried a different command model: hands are for navigation, the stylus is for editing.

Users are conditioned by ten years of smartphone apps to be able to drag things onscreen with their finger. But in our tests we found that users quickly acclimated to using the stylus for moving and resizing.

We also found that this division is anatomically sensible: fingers are coarse and imprecise, while the stylus is fine and precise. The stylus provides a higher degree of spatial freedom, covering more of the screen with smaller arm movements (which causes less fatigue), and higher accuracy thanks to its thin tip.
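Under this command model, the role of an input is decided by what touched the screen rather than by a mode the user must switch into. A minimal routing sketch, assuming hypothetical intent names; it uses the Pointer Events `pointerType` values and, as a further assumption, ignores mouse input.

```typescript
// Values of PointerEvent.pointerType defined by the Pointer Events spec.
type PointerKind = "touch" | "pen" | "mouse";

// Hypothetical intent names.
type Intent = "pan" | "pinch-zoom" | "stylus-edit" | "none";

// Hands navigate, the stylus edits: the intent comes from which kinds of
// pointers are currently down, not from a mode toggle.
function intentFor(activeKinds: PointerKind[]): Intent {
  // Any stylus contact means editing (this includes finger+stylus
  // combinations such as mirror, which a later stage would refine).
  if (activeKinds.some((k) => k === "pen")) return "stylus-edit";
  const touches = activeKinds.filter((k) => k === "touch").length;
  if (touches >= 2) return "pinch-zoom";
  if (touches === 1) return "pan";
  return "none"; // mouse-only input is ignored in this sketch
}
```

A consequence of this routing is that a resting palm or stray finger can only ever navigate, never move or delete content, which is the sense in which hands are "safe."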

Edges to create and delete cards

An important operation in any thinking tool is creating a new blank space for work. The user wants the equivalent of turning over a new page in a notebook, or pulling a fresh sheet of paper off of a stack.

A common approach to this is a plus button or menu option to create a new item. These are often paired with decisions the user needs to make — is it a text note or a drawing? Is it landscape or portrait orientation? All this ceremony slows down the user in getting straight to recording their ideas.

Deleting or archiving of items is the symmetrical operation: it must be fast and frictionless to dispose of whatever items the user has decided to cull. Ceremony (such as “Are you sure?” confirmation dialogs) and delay (such as long-press to activate a context menu) are antithetical to a speed-of-thought tool.

For Capstone, our approach is to “pull in from edge” to create a new board and “throw off the edge of the world” to delete.

In our testing we found it natural and even fun to both create and delete items. When creating a board, the user can fluidly continue the motion of their hand to put the board where they want it. The two operations (create board, place board) flow together into one seamless motion.

It was also fun to “flick” a card away as it approached the screen edge, including deleting several items in a row (flick, flick, flick).
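A minimal sketch of the two edge rules, assuming a hypothetical 24-pixel edge zone for "pull in from edge" and a fling check that projects the released card's position 150 ms ahead using its release velocity. Both numbers and the projection approach are assumptions, not Capstone's implementation.

```typescript
interface Vec {
  x: number;
  y: number;
}

interface Screen {
  width: number;
  height: number;
}

// Hypothetical tuning values.
const EDGE_PX = 24;
const FLING_LOOKAHEAD_MS = 150;

// "Pull in from edge": a drag that starts this close to any screen edge
// creates a new board, which then follows the finger to where it is dropped.
function startsAtEdge(p: Vec, s: Screen): boolean {
  return p.x <= EDGE_PX || p.y <= EDGE_PX || p.x >= s.width - EDGE_PX || p.y >= s.height - EDGE_PX;
}

// "Throw off the edge of the world": project where a released card is
// heading and delete it if that point is off screen.
function isThrownOff(center: Vec, velocityPxPerMs: Vec, s: Screen): boolean {
  const x = center.x + velocityPxPerMs.x * FLING_LOOKAHEAD_MS;
  const y = center.y + velocityPxPerMs.y * FLING_LOOKAHEAD_MS;
  return x < 0 || y < 0 || x > s.width || y > s.height;
}
```

Using velocity rather than position alone means a card released slowly near the edge stays on the board, while a quick flick sends it away, with no confirmation dialog in either case.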

Stylus tool selection

Tool- or mode-switching seems to be necessary for any sufficiently-powerful interface. To avoid the user needing to constantly reposition their hands, input capabilities will need to serve multiple functions. On the tablet, we have an even more restricted input space, so tool-switching is essential.

On Capstone, it was one of our design goals to keep as much of the screen space free of administrative

Here are some of the other tool-switching approaches we experimented with:

- Command glyphs

- A modifier key activated by the non-dominant hand

- Using a second entry tool, like a second stylus or a dial

- Pressing the stylus barrel button

- Using physical buttons on the tablet such as the volume button

- Using stylus pressure sensitivity to switch between modes, as described in Building interactive systems: principles for human-computer interaction

- Obscuring the front-facing camera with the non-dominant thumb, as tried by Astropad

- Knuckle gestures, as seen in Experimental analysis of mode switching techniques in pen-based user interfaces (video)

We don’t like the onscreen tool palette and consider this a significant unsolved design problem.

Findings

That concludes the description of Capstone’s major design decisions. If you have a Chrome OS tablet, we encourage you to try it for

To bring this manuscript to a close, we’ll share what we observed from testing on our team and with external users.

What worked

Mixed media + sketching

Freely-arrangeable multimedia cards and freeform sketching on the same canvas was a clear winner. Every user immediately understood it and found it enjoyable to use.

Advanced touch UI interactions

The command model of “hands are safe, stylus edits” was mostly a success. And hybrid finger-plus-stylus interactions like mirror, along with pulling in cards from the edge of the screen, created a fluid and natural environment.

Zoom navigation and “peek”

Nested boards with pinch zoom in/out worked as expected. But an unexpected benefit that emerged in user tests is the idea of a “peek.”

A user can begin a pinch-out gesture to zoom up a board to almost their full tablet screen and read in detail what is on the board. If it’s not what they’re looking for, they can reverse their pinch direction without lifting fingers from the screen to cancel the operation.
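The peek behavior falls out naturally if the zoom preview is driven continuously by finger spread and the commit decision is made only on release. A minimal sketch, assuming a hypothetical commit ratio of 2.5 (fingers spread to 2.5 times their starting distance) and hypothetical function names:

```typescript
// Hypothetical: spreading the fingers to 2.5 times their starting distance
// commits the zoom into the board.
const COMMIT_RATIO = 2.5;

// 0..1 progress used to drive the preview (how far the board has grown).
function peekProgress(startDistance: number, currentDistance: number): number {
  const ratio = currentDistance / startDistance;
  return Math.min(1, Math.max(0, (ratio - 1) / (COMMIT_RATIO - 1)));
}

// Decide only when the fingers lift. Reversing the pinch before lifting
// shrinks the spread below the threshold, so the peek simply cancels.
function onPinchRelease(startDistance: number, currentDistance: number): "enter-board" | "cancel" {
  return currentDistance / startDistance >= COMMIT_RATIO ? "enter-board" : "cancel";
}
```

Because progress is a pure function of the current finger spread, the preview grows and shrinks with the fingers, and cancelling needs no special-case code.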

Mobile-style motion design for productivity apps

In desktop productivity apps, the results of user actions typically snap into place onscreen with no motion or transitions. Adding mobile-style motion design and transitions to Capstone seemed to make the app come alive.

Our observations suggest that these are more than just visual flair: they help create a fluid environment where the user can move fast and with confidence, because the app visually reinforces every action they take.

Viability of no titles

We used representations of images, text, websites, and boards which look as much as possible like the original content. Boards in particular give you a feel for the colors and shapes they contain, and the ability to read any large handwritten titles. The “peek” capability reinforced this.

Our observation was that a “no titles” approach is indeed viable if there is enough summary information and it is fast and cheap to quickly look more closely. But given the small data sets used in our test cases, further research would be needed to find out if this will work at scale.

Fractal arrangement of ideas

The boards-within-boards navigation metaphor of Capstone encouraged users to organize their thoughts in a hierarchy.

For example, one of our test users developing content for a talk about their charitable organization’s mission created a series of nearly-empty boards with titles. Combined with board previews, this creates an informal table of contents for the ideas the user wanted to explore and could start to fill in.

Chalk-talk use case

The combination of cards, inking, and zoomable boards lent itself naturally to telling a multimedia story, à la chalk

We speculate that a product-quality version of Capstone could be useful for:

- distributed teams (screenshare your tablet while talking over video chat)

- live or prerecorded educational content as found in MOOCs such as those on Coursera

Unlike slide decks created with software like PowerPoint or Keynote, these “cards and sketching” presentations felt more lively and human.

What didn’t work

Stylus mode-switching

As documented above, our solution to stylus modes (card editing vs inking) was an onscreen tool palette, which users found clumsy and error-prone.

Complex motion and transitions on web stack

The web stack we were building on made complex transitions difficult. Smooth transitions, such as zooming in and out so that the archive felt like one continuous space, were a significant technical challenge. Our engineers concluded that the web stack (which is optimized for discrete page navigation) is not ideal for this. A better approach would be more like a video game: continuous rendering of an archive as a single “world.”

Left- vs right-handed use

Most of our tablet UI paradigms depend on knowing which hand is dominant for the user. For example, onscreen mode-switching buttons should be located wherever the user’s non-dominant hand is likely to be (including while gripping the tablet). But that place differs depending on the user’s handedness.

A left- vs right-handed toggle would probably be the solution for a more complete prototype.
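A minimal sketch of such a toggle, assuming a hypothetical vertical palette that spans the full height of one screen edge: for a right-handed user the palette goes on the left edge, under the non-dominant hand, and vice versa. The `Rect` shape and `paletteRect` function are illustrative.

```typescript
type Handedness = "left" | "right";

interface Rect {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Hypothetical layout: a vertical palette spanning one side of the screen,
// on the side of the user's non-dominant hand.
function paletteRect(
  dominant: Handedness,
  screenWidth: number,
  screenHeight: number,
  paletteWidth: number,
): Rect {
  // Right-handed users grip the tablet (and tap modes) with the left hand.
  const x = dominant === "right" ? 0 : screenWidth - paletteWidth;
  return { x, y: 0, width: paletteWidth, height: screenHeight };
}
```

Keeping placement in one function means every handedness-dependent element can read from the same setting instead of hard-coding a side.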

Problems with lost, malfunctioning, or out-of-battery stylus

Making the stylus a required part of a power-tool interface had many benefits, but it also exposed problems we faced throughout the project:

- A stylus that inexplicably ran out of batteries after a week, with no indication as to why it was not being recognized by the tablet

- Varying pressure sensitivity, with some users finding they had to press so hard as to distort the screen

- Frequent lost styluses (“wait, let me find my stylus…” was a constant refrain)

We speculate that, to be viable, a tablet+stylus platform would need to address these. One solution is that if stylus hardware became cheap enough and worked universally, then workplaces and individuals could keep pen-holders full of styluses around, just as today we keep spare pens around.

Screenshot clipping

While the Capstone clipper allows users to capture entire web pages, images, and fragments of text, often users wanted an “excerpt” of a website or PDF with all formatting intact. The user behavior here was to take a screenshot using the “capture region” screenshot capability of their desktop OS.

Screenshots, as a way to capture and archive content, have many downsides: they are not searchable, their text is not selectable, they lose quality when sized up, and most of the metadata (e.g. what website they came from) is lost.

And yet, screenshots are easy, reliable, and universal on all computing platforms. Users are naturally drawn to take screenshots of text: what could an application or platform do to lean into this tendency, such as automatic OCR? We think this warrants more investigation.

Conclusion

At Ink & Switch we believe in tools that help humans think, create, and grow in our personal and professional lives.

We feel that computers have significant untapped potential in this domain. But as the technology industry puts ever-more effort into consumer products, we wish there was a similar emphasis on productivity and creativity applications.

Capstone is one experiment with innovation in digital thinking tools. It suggests some promising directions such as mixed media cards and inking on a freeform canvas, spatial navigation, and the tablet as a device with a purpose distinct from phones or desktop computers. That is: a quiet space for ruminating on raw material and finding insights to guide career or life decisions.

If you're a developer of iPad or Surface applications, desktop productivity software, or a human-computer interaction researcher, we hope you find some inspiration from Capstone. We'd love to hear your thoughts:

Appendices

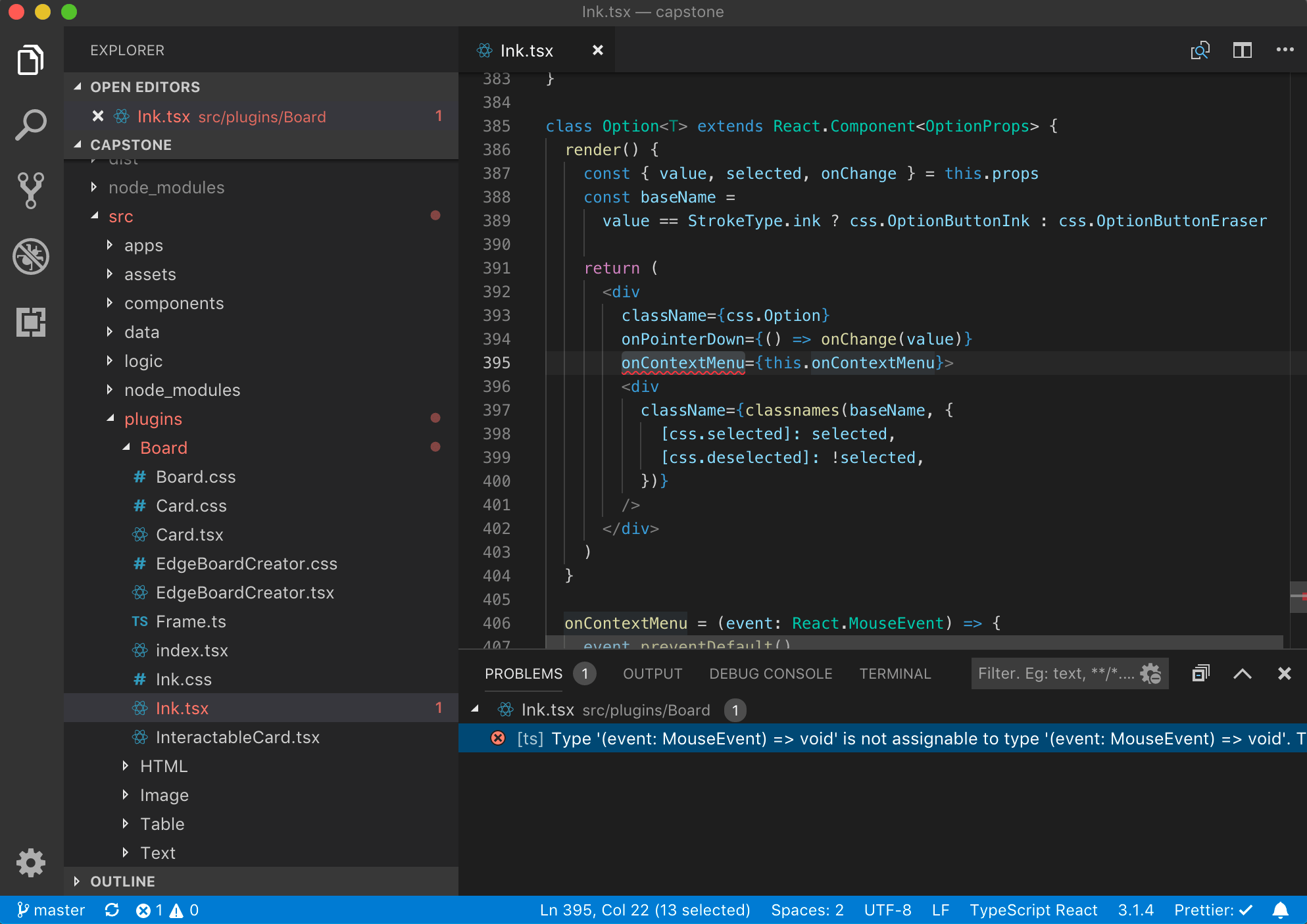

Appendix A: Technology stack

Capstone uses

Typescript

Having chosen to build on the web stack, Javascript is the default language. Our past experience with larger

We were happy with Typescript. The type-checking caught all kinds of deep bugs that saved us headaches later. On the other hand, it sometimes led to large investments of time in trying to figure out just the right type model.

We used

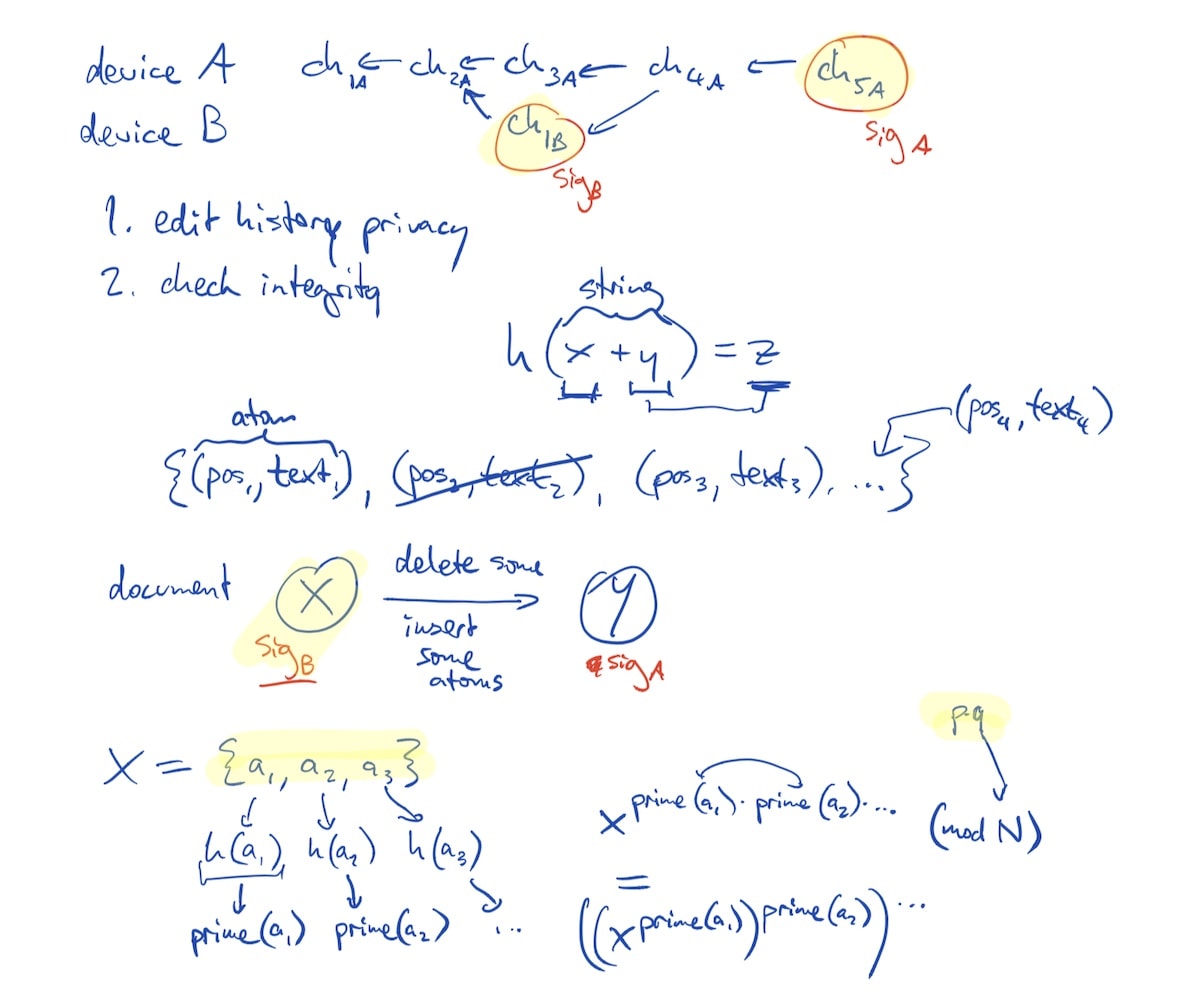

CRDTs, Automerge, Hypercore, and Hypermerge

Ink & Switch and the University of Cambridge computer science department have been developing an open-source, peer-to-peer collaboration layer based on conflict-free replicated data

We combined this technology with the

This choice of storage technology reflected a goal we had for the Capstone project that was never fully realized: realtime collaboration on boards between users, like one can have in a cloud application such as Milanote or Figma. But Hypermerge enables collaboration without the privacy concerns of sending the user’s data through a third party, and without the operational cost to the app developer of running cloud infrastructure.

In the end we only used Hypermerge for syncing between the user’s desktop browser extension and Capstone on the tablet (see Shelf as a portal).
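To make the CRDT idea concrete without relying on Automerge's API, here is a toy last-writer-wins map, one of the simplest state-based CRDTs. It is not how Automerge or Hypermerge work internally (their data model is far richer); it only illustrates the property that matters for syncing the shelf: replicas that exchange state converge to the same values. The class name, the Lamport-style counter, and the replica-name tie-break are illustrative choices.

```typescript
// Timestamp: a logical clock plus the replica name to break ties.
interface Stamp {
  time: number;
  replica: string;
}

interface Entry<V> {
  value: V;
  stamp: Stamp;
}

// Total order on stamps, so every replica picks the same winner.
function newer(a: Stamp, b: Stamp): boolean {
  return a.time !== b.time ? a.time > b.time : a.replica > b.replica;
}

// Toy last-writer-wins map (not Automerge).
class LwwMap<V> {
  private entries = new Map<string, Entry<V>>();
  private clock = 0;

  constructor(readonly replica: string) {}

  set(key: string, value: V): void {
    this.clock += 1;
    this.entries.set(key, { value, stamp: { time: this.clock, replica: this.replica } });
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    return entry === undefined ? undefined : entry.value;
  }

  // Merging is commutative and idempotent: for each key, keep the newer write.
  merge(other: LwwMap<V>): void {
    other.entries.forEach((theirs, key) => {
      const ours = this.entries.get(key);
      if (ours === undefined || newer(theirs.stamp, ours.stamp)) {
        this.entries.set(key, theirs);
      }
      // Advance our clock so later local writes win over what we've seen.
      this.clock = Math.max(this.clock, theirs.stamp.time);
    });
  }
}
```

No server decides the outcome: each device applies the same deterministic rule, which is the property that lets peer-to-peer syncing work without cloud infrastructure.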

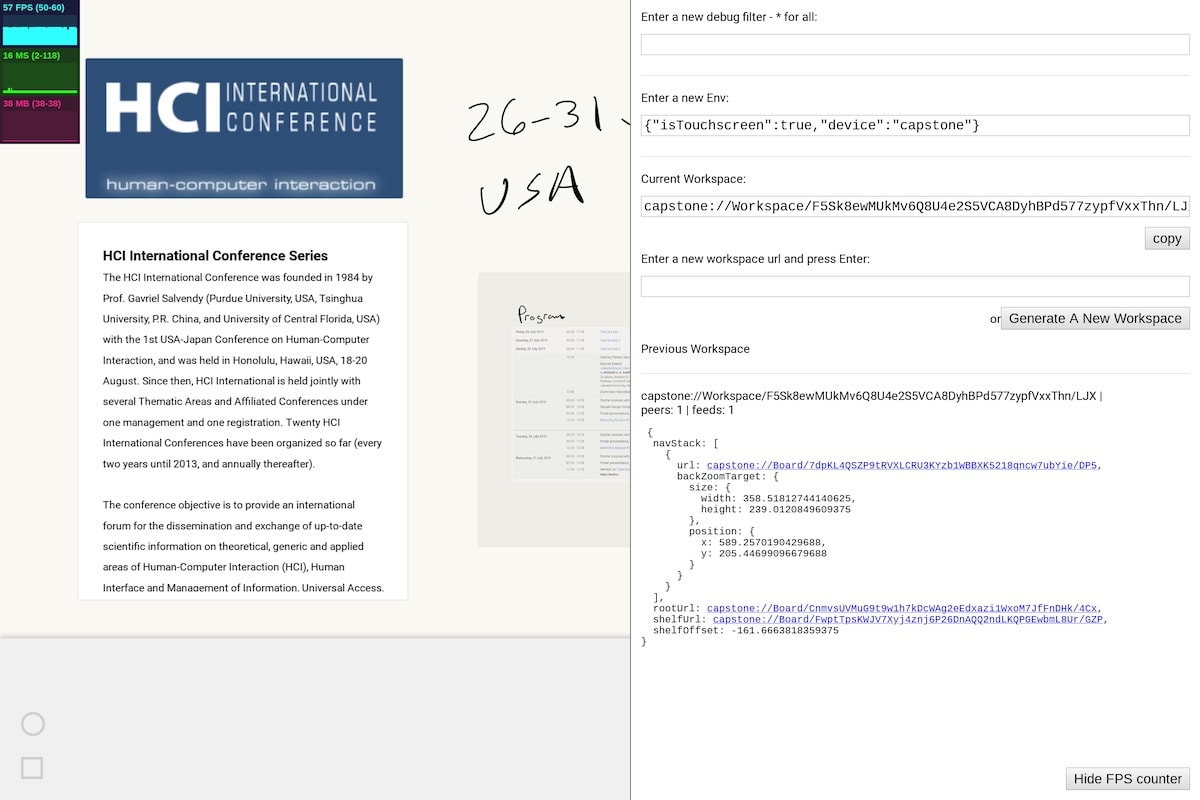

Capstone is already capable of realtime collaboration. You can open a debug panel by putting the tablet into laptop mode, pressing right shift, and copy-pasting the workspace URL into a message to a colleague. If they paste that URL into their Capstone, you’ll be working on the same board.

Debug panel with workspace URLs for realtime collaboration between Capstone users.

The Automerge and Hypermerge libraries developed further during the course of this project. That includes undo

Building on web technologies

One appeal of Chrome OS is that it is designed to run applications built on the web stack (HTML, CSS, the DOM, and Javascript). The web stack has historically had very good developer experience, and our team has had good results building prototypes on

Our hope was that Chrome OS and Chrome Apps would feel like Electron on a dedicated operating system and hardware, sort of an “Electron machine.”

We encountered many engineering problems related to the ways that the Javascript and web standards supported by Chrome Apps differ from those in Node.js, NPM, and standard web browsers. For example the strange and buggy API to localStorage; access to sockets (particularly in our need for fetch() limitations such as refusing Allow-Origin: * (seemingly arbitrary for an app that already has full TCP socket access).

React, the DOM, and transitions and animation

We similarly found that web technologies were a questionable fit for the type of continuous transitions (for example, zoom in and out) we wanted to implement, as well as micro-interactions with motion design and small animations that users of touch platforms have come to expect.

React is for rendering documents, not animations and spatial movement — so it felt like the wrong tool for this kind of application. In web browsers, clicking on a link usually results in a new document being rendered from scratch, and the DOM of the new document might be completely different from that of the previous document. There is no native or obvious way to animate the transition between the two.

Many native app platforms embrace the concept of a navigation hierarchy. For example, iOS offers a navigation

Take a simple example: the user deletes a card, but it should fade to zero opacity over a few hundred milliseconds before being removed from the DOM. In React we do this by adding object state that turns on a CSS class which defines the transition. But we found that doing this frequently resulted in convoluted state objects and lots of added complexity in the render function.

React and the web stack generally do provide some capabilities for animating between states. But there is no direct way to make transitions map to the progression of a user’s gesture. Perhaps more significantly, there is not a culture of transitions and motion design for web applications like there is for touch platforms.
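One way to read the "video game" suggestion in the findings is to derive every visual property from data on each frame, instead of toggling CSS classes in a render function. A minimal, framework-free sketch under that assumption: a deleted card keeps an entry with the time it was removed, its opacity is computed from elapsed time, and gesture-driven transitions interpolate directly from gesture progress. The 250 ms fade and all names are hypothetical.

```typescript
// Hypothetical fade duration ("a few hundred milliseconds").
const FADE_MS = 250;

// A deleted card is kept in the scene, with its removal time, until it fades.
interface Exiting {
  id: string;
  removedAt: number; // timestamp in ms
}

// Opacity is recomputed every frame from elapsed time; no CSS class toggling.
function fadeOpacity(e: Exiting, now: number): number {
  return Math.max(0, 1 - (now - e.removedAt) / FADE_MS);
}

// Cards whose fade has finished are dropped from the scene.
function stillFading(exiting: Exiting[], now: number): Exiting[] {
  return exiting.filter((e) => now - e.removedAt < FADE_MS);
}

// Gesture-driven transitions interpolate directly from gesture progress
// (clamped to 0..1), so reversing the gesture plays the transition backwards.
function lerp(from: number, to: number, progress: number): number {
  const t = Math.min(1, Math.max(0, progress));
  return from + (to - from) * t;
}
```

Each frame (for example from a `requestAnimationFrame` loop), a renderer would read these values directly, so a removal or a half-finished gesture is just more data rather than extra component state.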

Finger and stylus gestures

We started with

Our hybrid finger/stylus gestures were a problem for the web pointer input APIs. Touching the stylus to the screen sends a pointercancel event and no further touch input. This is probably related to palm rejection, but lack of application control on this was frustrating.
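Since the platform, not the app, decides when a pointer is cancelled, the most an app can do is keep its own gesture state consistent when that happens. A minimal defensive sketch: a tracker that forgets cancelled pointers and flags the gesture as interrupted, so that a half-finished finger+stylus action (like mirror) can be rolled back rather than committed. `PointerSample` is a hypothetical stand-in for a subset of DOM `PointerEvent` fields (coordinates simplified to `x`/`y`), and this does not work around the underlying behavior.

```typescript
type PointerKind = "touch" | "pen" | "mouse";
type PointerPhase = "pointerdown" | "pointermove" | "pointerup" | "pointercancel";

// Hypothetical subset of PointerEvent fields, declared locally so the
// sketch runs without the DOM.
interface PointerSample {
  type: PointerPhase;
  pointerId: number;
  pointerType: PointerKind;
  x: number;
  y: number;
}

class GestureTracker {
  readonly active = new Map<number, PointerSample>();
  interrupted = false;

  handle(e: PointerSample): void {
    switch (e.type) {
      case "pointerdown":
        this.active.set(e.pointerId, e);
        break;
      case "pointermove":
        // Ignore hover moves from pointers that are not down.
        if (this.active.has(e.pointerId)) this.active.set(e.pointerId, e);
        break;
      case "pointerup":
        this.active.delete(e.pointerId);
        break;
      case "pointercancel":
        // The platform took this pointer away (for example when the stylus
        // lands). Forget it and flag the gesture so a partial action can be
        // rolled back instead of committed.
        this.active.delete(e.pointerId);
        this.interrupted = true;
        break;
    }
  }
}
```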

We also found ourselves wanting wet vs dry stroke support in the operating system, like the excellent Inking APIs in Microsoft

Overall, the web stack did not serve our needs for this application. We’re open to the possibility that we’re “doing it wrong,” but even our attempts to find web-stack engineers who have the depth of knowledge about transitions and complex gesture input were not successful.

Appendix B: Design language

In designing a product, it’s helpful to start with the “why” of first principles. That leads to the “what” of a design language, which in turn leads to the “how” of particular implementation details.

The table below illustrates our why → what → how model for Capstone.

| Principles | Design language | Implementation |
|---|---|---|

Appendix C: Web page archiving

Capstone’s Clipper browser extension allows the user to save entire web pages into their Capstone tablet’s storage. This proved to be a significant technical design challenge.

The goals are simple:

- When the user clips a website, save exactly what they are seeing. They should feel confident that if they look back a day, a week, or a year later they’ll see the same thing.

- Be able to use it offline. If the user is sifting through their saved raw material on a train, in a library, or at a conference with a spotty network, the saved websites should load just the same as text, images, and sketches in Capstone.

Problems with archiving web pages

- Web pages are often behind paywalls, like a subscription news site, or behind a login page, like a private ticketing system such as GitHub or Jira.

- Web pages often change over time. Think of a stock ticker page, a marketplace listing on a site like eBay or AirBnB, or the front page of a blog.

- Web pages move or go offline over time. While we all love The Wayback Machine or sometimes the Google cache, these solutions don’t feel quite right for a personal archive.

Digital preservation on a large scale is a massive challenge. See Archiving the Dynamic

Browser’s File -> Save

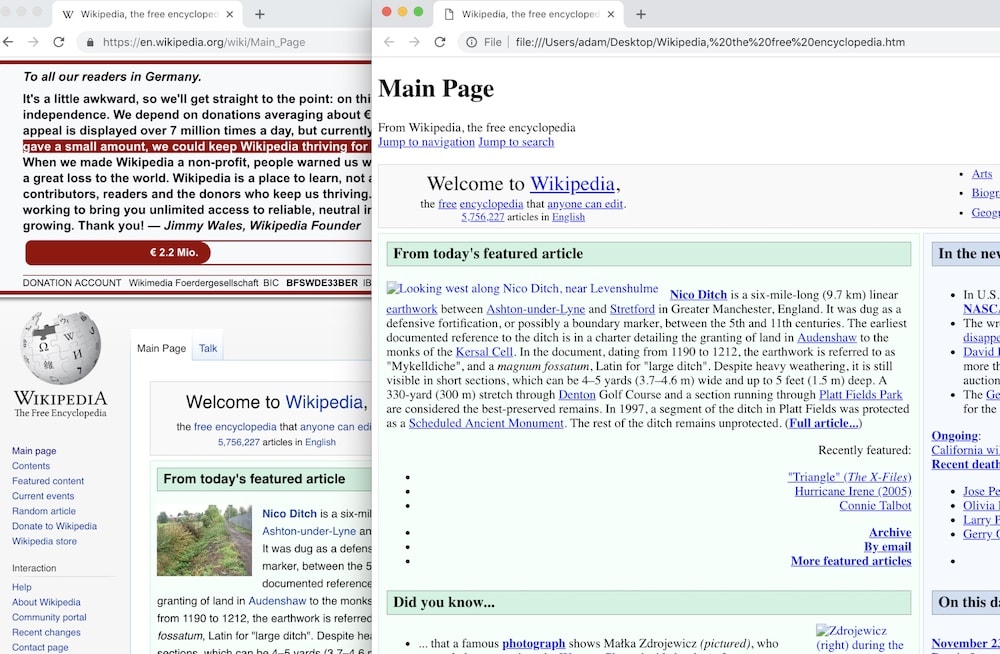

What do browsers suggest as their own archive format? Every major browser has a File -> Save function in its menus and even a hotkey (such as ctrl-S) mapped to it. But despite the prime UI real estate this is given, they barely work at all on the modern web.

The front page of Wikipedia saved from a browser and re-opened in the same browser. While the text is preserved, most users would not consider this a good snapshot.

The save formats are a single .HTML file or “Web Page, Complete,” which saves the HTML along with a folder of the page’s media. These often don’t reload into the browser reliably. Sites which use lots of dynamic content, absolute URL references to external media, XHR requests, etc., are likely to not render at all.

Print to PDF

Another browser function is print to PDF. The output is legible and the PDF format has proven durable over time. But it still bears only a passing resemblance to what the user was seeing when they saved it.

Wikipedia as a PDF.

Screenshots

In the age of mobile apps with content the user cannot directly save or copy, screenshots have become the gold standard for sharing digital content.

It’s a trustworthy format and looks like exactly what the user saw. But so much of the original content is lost: they cannot select, copy-paste, or search the text; they cannot reflow or reformat it for a different screen; in general it loses much of its “webpageness” in the screenshotting process.

Screenshots also tend to include information the user doesn’t want (such as browser UI or neighboring windows) while losing information they do want (parts of the website outside the scroll window).

wget -r

One can use wget -r (recursive download) to convert a dynamic-content site (say, a WordPress blog) into static HTML that can be hosted nearly anywhere, such as S3. If you own the website, this works reasonably well, provided you’re willing to spend some time tweaking the output.

But for Capstone’s needs, a background wget process lacks the browser’s context (such as login cookies) and loses any dynamic state on the page.

MHTML, WARC, and Webrecorder

A more modern format comes from the

We considered the WARC approach for Capstone. But we saw that the rendering logic is complicated, which goes against the idea of a simple, future-proof format.

Our favorite solution: freeze-dry

The solution we settled on for Capstone is

Freeze-dry takes the page’s DOM as it looks at that moment, with all the context of the user’s browser, including authentication cookies and modifications made to the page dynamically via JavaScript. It disables anything that would make the page change (scripts, network access). It captures every external asset required to faithfully render the page and inlines each one into the HTML.
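To make the idea concrete, here is a minimal sketch of that transformation. It is not freeze-dry’s actual code or API. `ElementNode` is a hypothetical simplified DOM node, and the sketch assumes the page’s assets were already fetched (with the browser’s cookies) and encoded as `data:` URLs in the `assets` map. It drops `<script>` elements and inline `on*` handlers, rewrites `src`/`href`/`poster` to absolute URLs or their inlined copies, and adds a Content-Security-Policy `<meta>` tag to `<head>` so the snapshot cannot run scripts or reach the network.

```typescript
// Hypothetical simplified DOM node; a real tool walks the browser's live DOM instead.
interface ElementNode {
  tag: string;
  attrs: Record<string, string>;
  children: Array<ElementNode | string>;
}

// Attributes whose values are URLs: resolved to absolute, inlined when an asset is available.
const URL_ATTRS = new Set(["src", "href", "poster"]);

// Allow only inline (data:) images, media, fonts, and styles; scripts fall back to 'none'.
const CSP =
  "default-src 'none'; img-src data:; media-src data:; font-src data:; style-src data: 'unsafe-inline'";

function freezeDry(
  node: ElementNode,
  baseUrl: string,
  assets: Map<string, string>, // absolute URL -> data: URL, fetched beforehand (assumption)
): ElementNode | null {
  // Scripts could change the page after capture, so they are dropped entirely.
  if (node.tag === "script") return null;

  const attrs: Record<string, string> = {};
  for (const [name, value] of Object.entries(node.attrs)) {
    // Inline event handlers (onclick, onload, ...) are scripts too.
    if (name.toLowerCase().startsWith("on")) continue;
    if (URL_ATTRS.has(name)) {
      const absolute = new URL(value, baseUrl).href;
      attrs[name] = assets.get(absolute) ?? absolute;
    } else {
      attrs[name] = value;
    }
  }

  const children: Array<ElementNode | string> = [];
  for (const child of node.children) {
    if (typeof child === "string") {
      children.push(child);
    } else {
      const frozen = freezeDry(child, baseUrl, assets);
      if (frozen !== null) children.push(frozen);
    }
  }

  // Put the policy first in <head> so it applies before anything else loads.
  if (node.tag === "head") {
    children.unshift({
      tag: "meta",
      attrs: { "http-equiv": "Content-Security-Policy", content: CSP },
      children: [],
    });
  }
  return { tag: node.tag, attrs, children };
}
```

URLs with no captured asset are kept as absolute URLs rather than dropped. Ordinary links then still work when the user is online, even though the snapshot itself does not depend on them.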

The Capstone browser extension saves a copy of the web page to the shelf via freeze-dry.

We felt that this is a philosophically strong approach to the problem. Freeze-dry can save to a serialized .HTML file for viewing in any browser; for Capstone, we stored the clipped page as one giant string in the app’s datastore.
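Storing the clip as a single string meets the offline requirement only if nothing in that string still points at the network. The sketch below assumes a hypothetical `Clip` record and an in-memory `ClipStore`; Capstone’s actual datastore schema isn’t described here. It uses a regular-expression heuristic, not a full HTML parser, to reject snapshots whose `src` attributes or CSS `url(...)` values still reference `http(s)` URLs.

```typescript
// Hypothetical record for a clipped page; not Capstone's real schema.
interface Clip {
  id: string;
  sourceUrl: string;
  capturedAt: string; // ISO-8601 timestamp
  html: string; // the entire frozen page as one string
}

// Heuristic: find src="http..." attributes and url(http...) CSS values that would need the network.
function externalRefs(html: string): string[] {
  const pattern = /(?:\bsrc\s*=\s*["']?|url\(\s*["']?)(https?:\/\/[^"')\s>]+)/gi;
  return Array.from(html.matchAll(pattern), (m) => m[1]);
}

// Hypothetical in-memory stand-in for the app's datastore.
class ClipStore {
  private clips = new Map<string, Clip>();

  save(clip: Clip): void {
    const refs = externalRefs(clip.html);
    if (refs.length > 0) {
      throw new Error(`clip ${clip.id} still depends on the network: ${refs.join(", ")}`);
    }
    this.clips.set(clip.id, clip);
  }

  get(id: string): Clip | undefined {
    return this.clips.get(id);
  }
}
```

Plain `<a href>` links are deliberately not flagged. Following a link may need the network, but rendering the saved page does not.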

Appendix D: FPS counter and culture of fast software

One of our product/design goals was to make a tool that operates at the speed of thought: the user does not need to wait in order to get their thoughts on a page.

On the product side that means removing steps in a task like ingesting a new piece of media or making a mark on the digital page. On the technology side, it means sustaining 60 frames per second for output, and keeping latency very low (<50ms for most operations).
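A latency target is easier to keep when it is measured. Here is a minimal sketch, assuming a hypothetical `timeOperation` helper that wraps a synchronous operation with `performance.now()` and warns when it exceeds the 50 ms budget. At 60 frames per second, 50 ms works out to three frames.

```typescript
// Budgets from the text: 60 frames per second, and under 50 ms for most operations.
const TARGET_FPS = 60;
const LATENCY_BUDGET_MS = 50;
// 50 ms * 60 frames/s / 1000 ms/s = 3 frames of latency at most.
const LATENCY_BUDGET_FRAMES = (LATENCY_BUDGET_MS * TARGET_FPS) / 1000;

interface Timing {
  label: string;
  elapsedMs: number;
  withinBudget: boolean;
}

// Hypothetical helper: run a synchronous operation, time it, and warn if it exceeds the budget.
function timeOperation<T>(label: string, op: () => T): { result: T; timing: Timing } {
  const start = performance.now();
  const result = op();
  const elapsedMs = performance.now() - start;
  const timing: Timing = { label, elapsedMs, withinBudget: elapsedMs < LATENCY_BUDGET_MS };
  if (!timing.withinBudget) {
    console.warn(`${label} took ${elapsedMs.toFixed(1)} ms (budget: ${LATENCY_BUDGET_MS} ms)`);
  }
  return { result, timing };
}
```

This only measures synchronous work. The wait for the next paint is not included, so the result understates the latency the user actually perceives.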

Framerate

Briefly, most computer displays refresh 60 times per second, and each refresh is one frame. Performant software responds to user input and updates the display to match in as few frames as possible, and does not skip frames (aka “jank”) when doing heavy computation.
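The frame arithmetic can be made concrete. At 60 Hz each frame has about 1000 / 60 ≈ 16.7 ms, so a gap of roughly 33 ms between painted frames means one refresh was skipped. The hypothetical `droppedFrames` function below estimates jank from a list of frame timestamps in milliseconds, such as those passed to `requestAnimationFrame` callbacks. It assumes a fixed 60 Hz display.

```typescript
// At 60 Hz, each frame has roughly 1000 / 60 ≈ 16.7 ms to do its work.
const REFRESH_HZ = 60;
const FRAME_MS = 1000 / REFRESH_HZ;

// Given timestamps (ms) of consecutive painted frames, estimate how many refreshes were skipped.
// A gap of ~33 ms means one frame was missed; ~50 ms means two; and so on.
function droppedFrames(timestamps: number[]): number {
  let dropped = 0;
  for (let i = 1; i < timestamps.length; i++) {
    const gap = timestamps[i] - timestamps[i - 1];
    // Round to the nearest whole number of refresh intervals to absorb timer jitter.
    const intervals = Math.max(1, Math.round(gap / FRAME_MS));
    dropped += intervals - 1;
  }
  return dropped;
}
```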

We tried to create a culture of fast software on the Capstone team. This means, first and foremost, awareness for all team members, because you make what you

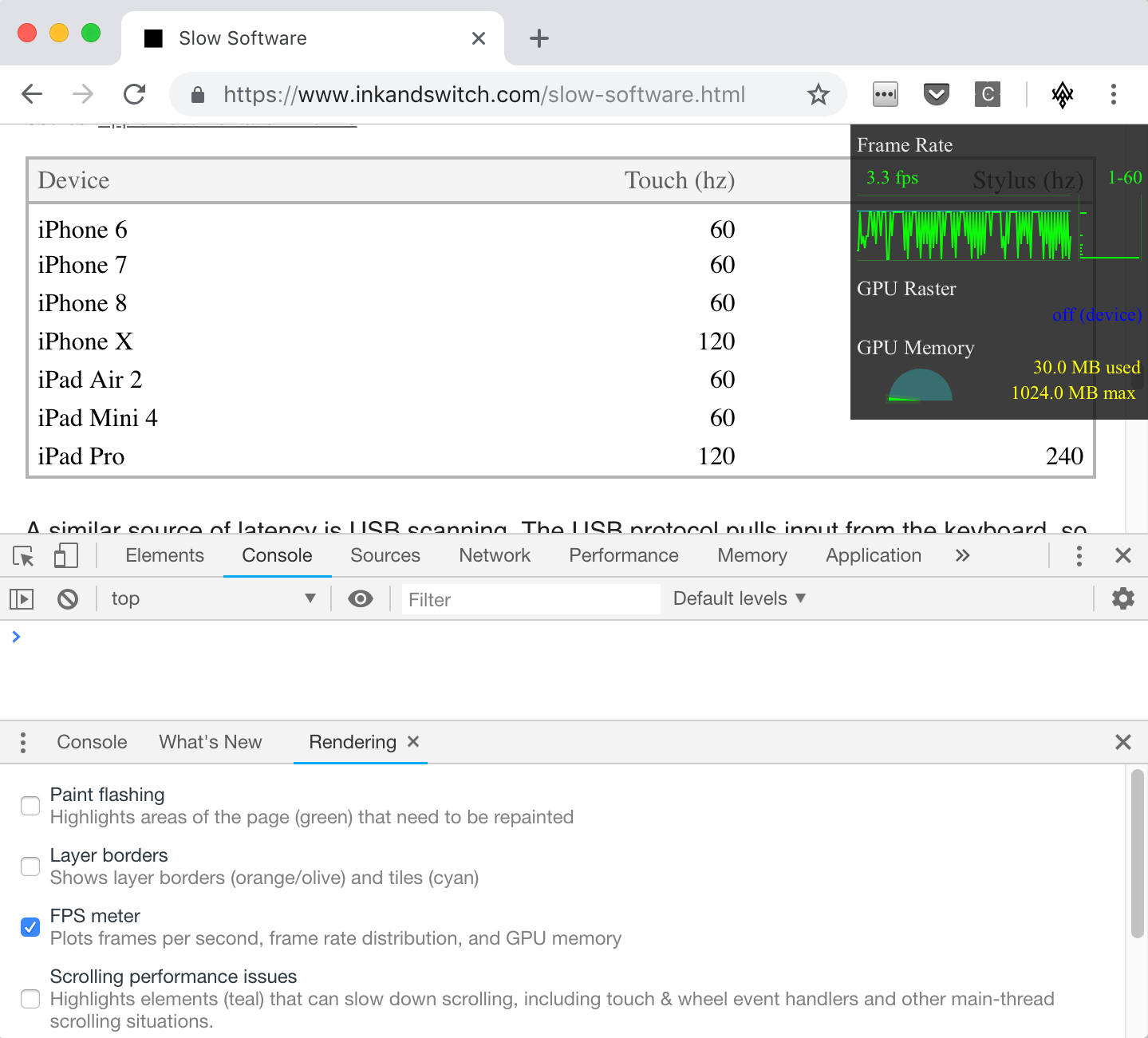

The Chrome DevTools FPS counter is a potential place to start:

The Chrome DevTools framerate monitor floats in the upper right.

In practice, we found this hard to activate team-wide (it is buried in a submenu and doesn’t preserve its state across app restarts) and hard to interpret (the FPS counter only updates while the page is refreshing, not when the page is at a standstill).

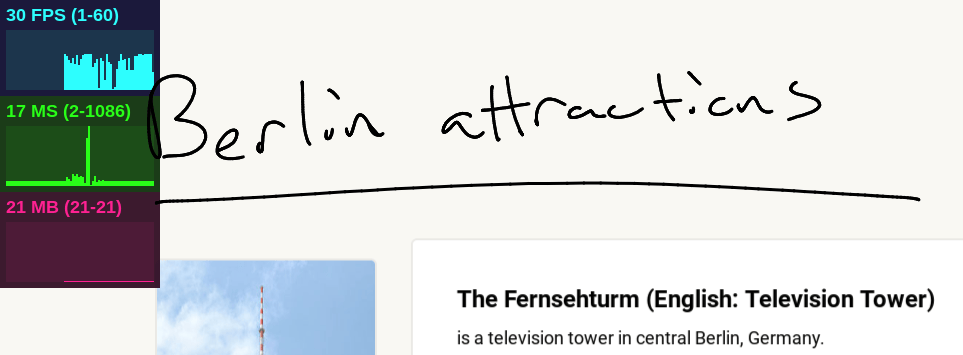

For Capstone we used an FPS counter embedded in the app, using the excellent Stats.js javascript performance

The stats.js FPS counter. Enable in Capstone via the right-shift debug menu.
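The core of an always-on, in-app counter is small. This is a minimal sketch of the idea, not Stats.js’s implementation. The hypothetical `RollingFps` class keeps the timestamps of frames painted during the last second and reports how many there were. In the browser, `tick` would be called from a `requestAnimationFrame` loop.

```typescript
// Hypothetical rolling FPS counter: counts frames painted during a trailing time window.
class RollingFps {
  private frames: number[] = [];
  private readonly windowMs: number;

  constructor(windowMs = 1000) {
    this.windowMs = windowMs;
  }

  // Call once per frame with a millisecond timestamp, e.g. the one passed to a
  // requestAnimationFrame callback:
  //   const loop = (t: number) => { counter.tick(t); requestAnimationFrame(loop); };
  tick(now: number): void {
    this.frames.push(now);
    this.prune(now);
  }

  // Frames per second over the trailing window ending at `now`.
  fps(now: number): number {
    this.prune(now);
    return (this.frames.length * 1000) / this.windowMs;
  }

  // Forget frames that fall outside the window.
  private prune(now: number): void {
    while (this.frames.length > 0 && this.frames[0] <= now - this.windowMs) {
      this.frames.shift();
    }
  }
}
```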

Platform choice

Experienced software developers know that the performance of an app or codebase tends to be at its best when you start out, and only goes downhill from there. The dual arguments of “we’ll optimize it later” and “that’s just a performance optimization” are logically compelling but empirically weak.

Platform choice matters a lot. Worse-performing languages and runtimes trade away the performance the user experiences in exchange for the comfort of the developer. There’s a good argument to be made for this tradeoff (hardware is cheap, programmers are

But this is how we get to a place where computers are faster than ever, and yet loading a chat program on a top-of-the-line workstation takes 45

We had some concerns in choosing the web stack, which has historically optimized for developer experience over application performance. But the particular subset of web technologies we used seemed promising:

- Chrome OS comes from Chrome and Google, which have an incredible history of performant software culture. The Chrome DevTools performance section, including profiling and flame graphs, offers a great built-in experience, and about:tracing lets you go even deeper.

- JavaScript has been shown to be capable of high performance with V8 and Node.js.

- Reactive programming generally, and React specifically, offer a logically good model for optimizing software based around responding to user input.
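The appeal of the reactive model for performance is that work can be skipped when inputs have not changed. Here is a minimal sketch of that idea. It is similar in spirit to React.memo’s default shallow comparison of props, but it is not React’s code. The hypothetical `memoRender` wraps a pure render function and only re-runs it when some prop changes by `Object.is`.

```typescript
type Props = Record<string, unknown>;

// Shallow comparison: same set of keys, and each value identical by Object.is.
function shallowEqual(a: Props, b: Props): boolean {
  const keysA = Object.keys(a);
  const keysB = Object.keys(b);
  if (keysA.length !== keysB.length) return false;
  return keysA.every((k) => Object.prototype.hasOwnProperty.call(b, k) && Object.is(a[k], b[k]));
}

// Hypothetical wrapper: cache the last props and result, re-rendering only when props change.
function memoRender<P extends Props, R>(render: (props: P) => R): (props: P) => R {
  let cache: { props: P; result: R } | undefined;
  return (props: P) => {
    if (cache === undefined || !shallowEqual(cache.props, props)) {
      cache = { props, result: render(props) };
    }
    return cache.result;
  };
}
```

The comparison is cheap, but it only helps if props are treated as immutable. If an object is mutated in place, it keeps the same reference and the change is missed.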

In practice, it did not live up to our hopes. We labored extensively to keep performance up with techniques such as using

Video games

Video games are a subset of the computing industry that has a strong culture of performance. One might say that action games like Pac-Man, Quake, or Destiny are speed-of-thought tools.

Video game developers are part of this equation, but so are hardware manufacturers with entire brands built on low-latency input

Even end users of video games (aka players) are aware of framerates, with FPS counters built into gaming

So the question is: why do developers of productivity and creative tools not have this same awareness? In our anecdotal experience, many software engineers with a web or mobile background are only dimly aware of framerate as a concept, let alone whether their own apps maintain a steady framerate.

Or to put it another way: why does a text editor struggle when video game engines can render a full-detail 3D city without slowing

We don’t know the exact solution here, but we’d like to propose that all of us doing productivity app development take a few cues from video game performance culture.